A data lake is often viewed as a catch-all solution for enterprises struggling with siloed data.

A data lake is a centralized storage system that lets you store your organization’s data in its raw, unprocessed form, regardless of format or structure. Because the data is kept in its raw form, data analysts, data scientists, and other stakeholders can access what they need for analysis and decision-making. A traditional data warehouse, by contrast, requires data to be processed and organized before it is stored.

However, as data volumes keep growing, data lakes can quickly become overwhelming and difficult to manage. Are organizations in danger of drowning in their data lakes?

In this article, we’ll explore the potential risks and challenges associated with data lakes and provide strategies for managing and governing data to avoid getting caught up in the data deluge.

Is There Such a Thing as “Too Much” Data?

The answer is not simple. Access to large amounts of data can be valuable for many organizations, but it also brings challenges and drawbacks that need to be addressed.

The Importance of Data Governance

One of the main challenges of working with large amounts of data is governance. Without a proper strategy for managing data, a data lake can become unwieldy, leading to data quality, security, and privacy issues.

Organizations must have a clear data governance strategy that defines policies and procedures for data storage, access, and usage.

They must also implement tools and processes for data cataloging, metadata management, and data lineage tracking. Regularly reviewing the lake and removing unnecessary data helps keep it manageable and useful.
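To make cataloging, metadata management, and lineage tracking more concrete, here is a minimal Python sketch. The `CatalogEntry` and `DataCatalog` classes, their fields, the dataset names, and the 90-day idle threshold are all hypothetical; production lakes typically rely on a dedicated catalog service rather than an in-memory dictionary.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CatalogEntry:
    """Metadata recorded for one dataset in the lake (hypothetical schema)."""
    name: str
    owner: str
    fmt: str                # storage format, e.g. "parquet" or "json"
    last_accessed: date
    upstream: list[str] = field(default_factory=list)  # datasets this one was derived from


class DataCatalog:
    """Minimal in-memory catalog: metadata lookup, lineage, and cleanup candidates."""

    def __init__(self) -> None:
        self.entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        self.entries[entry.name] = entry

    def lineage(self, name: str) -> list[str]:
        """Return all upstream ancestors of `name`, walking the lineage graph depth-first."""
        seen: list[str] = []
        stack = list(self.entries[name].upstream)
        while stack:
            parent = stack.pop()
            if parent in seen:
                continue
            seen.append(parent)
            if parent in self.entries:
                stack.extend(self.entries[parent].upstream)
        return seen

    def stale(self, today: date, max_idle_days: int) -> list[str]:
        """Datasets not read for more than `max_idle_days`: candidates for a cleanup review."""
        return [
            e.name
            for e in self.entries.values()
            if (today - e.last_accessed).days > max_idle_days
        ]


catalog = DataCatalog()
catalog.register(CatalogEntry("raw_orders", "sales", "json", date(2023, 1, 5)))
catalog.register(CatalogEntry("clean_orders", "data-eng", "parquet", date(2023, 6, 1), ["raw_orders"]))
catalog.register(CatalogEntry("revenue_report", "finance", "parquet", date(2023, 6, 2), ["clean_orders"]))

print(catalog.lineage("revenue_report"))                   # ['clean_orders', 'raw_orders']
print(catalog.stale(date(2023, 6, 30), max_idle_days=90))  # ['raw_orders']
```

Flagging stale datasets for review, rather than deleting them automatically, leaves the final decision with the owner recorded in the catalog.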

The key to keeping a growing data lake from becoming a liability is to treat data management as an ongoing process rather than a one-time project. By continually monitoring and managing their data lakes, organizations can ensure their data remains valuable.

What Does Ongoing Data Management Look Like?

Ongoing data management is a comprehensive and continuous process of collecting, organizing, analyzing, and maintaining data to ensure it remains accurate, relevant, and up-to-date. It covers all aspects of data management, including data entry, quality, integration, security, and governance.

Data Collection, Organization, and Analysis

Data management starts with collecting data from internal and external sources. Once collected, the data is organized so it is easy to access and analyze. This includes creating data models, developing data dictionaries, and establishing data standards and protocols.

Data analysis applies analytics tools and techniques to identify significant patterns, trends, and insights within the data. By analyzing data, organizations can gain valuable insights into customer behavior, market trends, and operational performance, enabling them to make informed business decisions that drive growth and profitability.

Data Security and Governance

Data security is another critical aspect of ongoing data management. Organizations must implement appropriate security measures such as firewalls, encryption, and access controls to protect sensitive data from unauthorized access.

Establishing data governance policies and procedures ensures that data is managed as a valuable asset and used in a way that is consistent with organizational goals and objectives. This involves creating data retention policies, privacy policies, and access and sharing procedures.
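A retention policy can be expressed as data that code checks records against. The sketch below assumes a hypothetical mapping from data category to maximum age in days; the categories and periods are placeholders, since real retention periods come from legal and business requirements.

```python
from datetime import date, timedelta

# Hypothetical retention policy: maximum age in days per data category.
# These periods are placeholders, not legal or compliance guidance.
RETENTION_DAYS = {
    "web_logs": 90,
    "marketing_leads": 730,
    "customer_records": 365 * 7,
}


def expired(category: str, created: date, today: date) -> bool:
    """True if a record in `category` is older than its retention period allows."""
    if category not in RETENTION_DAYS:
        # Fail loudly so unclassified data gets a policy instead of being kept forever.
        raise KeyError(f"no retention policy for category {category!r}")
    return today - created > timedelta(days=RETENTION_DAYS[category])


today = date(2024, 1, 1)
records = [
    ("web_logs", date(2023, 9, 1)),
    ("web_logs", date(2023, 12, 15)),
    ("marketing_leads", date(2021, 6, 1)),
]
to_delete = [(cat, created) for cat, created in records if expired(cat, created, today)]
print(to_delete)  # the 2023-09-01 web log and the 2021-06-01 lead
```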

Is Software the Iceberg or the Lifeboat?

Data quality issues are a major concern for many organizations, as they can lead to inaccurate insights, flawed decision-making, and costly mistakes. While software solutions are often used to manage data quality, they can also contribute to the problem, particularly if they are outdated and cannot keep up with the growing volume and complexity of data.

However, the right software solution with modern and adaptable AI capabilities can serve as a lifeboat, helping organizations to prevent data quality issues and avoid the ongoing need for data cleanup.

The Role of AI-Powered Software Solutions

One of the key advantages of modern AI-powered software solutions is their ability to learn from patterns and trends in the data and continuously adapt to changing data quality issues. They use machine learning algorithms to automatically identify and correct errors in data and detect patterns and anomalies that may indicate data quality issues.
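The paragraph above describes machine-learning approaches. As a much simpler, non-ML baseline for the same idea, this sketch flags outliers in a monitoring metric such as daily row counts using a modified z-score (median and median absolute deviation). The function name, sample counts, and 3.5 threshold are assumptions for illustration; this is not how any particular AI product works.

```python
import statistics


def flag_anomalies(values: list[float], threshold: float = 3.5) -> list[int]:
    """Return indices whose modified z-score exceeds `threshold`.

    The modified z-score uses the median and the median absolute deviation (MAD),
    so a single extreme value cannot hide itself by inflating the mean and
    standard deviation.
    """
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        # At least half the values equal the median; the score is undefined here.
        return []
    return [
        i
        for i, v in enumerate(values)
        if 0.6745 * abs(v - median) / mad > threshold
    ]


# Hypothetical daily row counts for one ingestion feed; index 5 looks like a partial load.
row_counts = [10_120, 10_045, 9_980, 10_210, 10_090, 312, 10_150]
print(flag_anomalies(row_counts))  # [5]
```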

In addition to their adaptability, they can automate tedious data quality reviews, freeing up some of your most skilled data professionals to focus on higher-impact work. For example, they can automatically flag data quality issues, route them to the appropriate teams for resolution, and update data quality metrics in real time.
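Flagging issues, routing them to owners, and updating metrics can be pictured as a small dispatch step. In this hypothetical sketch, `OWNERS` stands in for ownership data that would normally come from a catalog, unowned datasets fall back to a platform team, and all team, dataset, and rule names are invented for illustration.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityIssue:
    dataset: str
    rule: str  # name of the failed check, e.g. "null_customer_id"


# Hypothetical ownership map; in practice this would be read from the data catalog.
OWNERS = {"orders": "sales-ops", "invoices": "finance"}
FALLBACK_TEAM = "data-platform"


def route(issues: list[QualityIssue]) -> tuple[dict[str, list[QualityIssue]], Counter]:
    """Assign each issue to its owning team and count failures per rule."""
    queues: defaultdict[str, list[QualityIssue]] = defaultdict(list)
    failures_per_rule: Counter = Counter()
    for issue in issues:
        team = OWNERS.get(issue.dataset, FALLBACK_TEAM)
        queues[team].append(issue)
        failures_per_rule[issue.rule] += 1
    return dict(queues), failures_per_rule


issues = [
    QualityIssue("orders", "null_customer_id"),
    QualityIssue("invoices", "negative_amount"),
    QualityIssue("clickstream", "null_customer_id"),
]
queues, failures_per_rule = route(issues)
print(sorted(queues))                         # ['data-platform', 'finance', 'sales-ops']
print(failures_per_rule["null_customer_id"])  # 2
```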

By leveraging the power of AI, software solutions can help organizations overcome the challenges of poor data quality and prevent data quality issues from arising in the first place. These solutions can serve as a lifeboat, allowing organizations to navigate the complex waters of data management and emerge with accurate, reliable, and actionable data.

While outdated software may contribute to poor data quality, modern, adaptable AI-powered software offers a promising way to prevent data quality issues and eliminate the need for constant data cleanup.

Final Thoughts: Data Management Best Practices When Using Data Lakes

Data lakes offer a powerful way for organizations to consolidate their data in one central location. However, as the volume and complexity of data continue to grow, organizations risk drowning in their data lakes.

To mitigate these risks and ensure data quality, security, and privacy, organizations must implement effective data governance strategies and ongoing data management processes.

Additionally, leveraging modern AI-powered software solutions can help prevent data quality issues and automate data quality tasks, freeing up resources for more strategic activities.

Ultimately, by adopting a proactive approach to data management and leveraging technology, organizations can navigate the complex waters of data management and emerge with accurate, reliable, and actionable data.

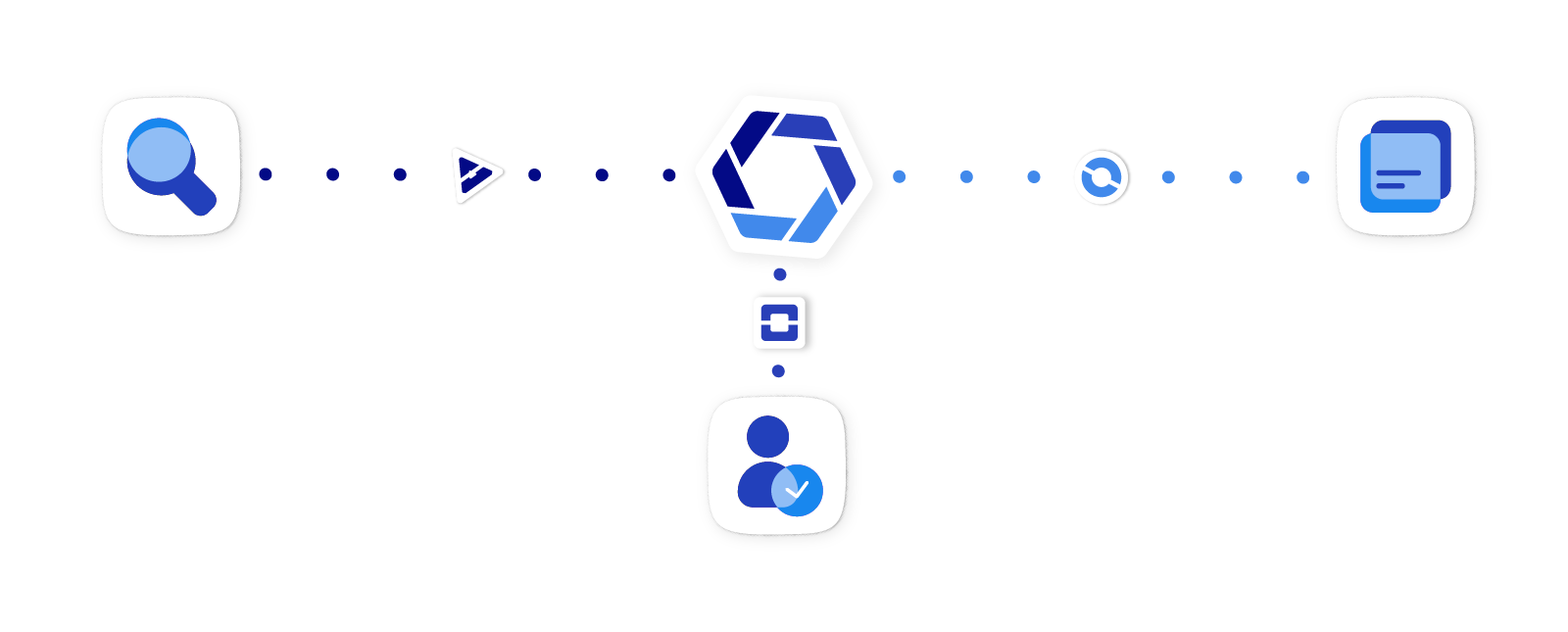

Take control of your data today with Kizen. Our advanced data management solutions and AI-powered software can help you prevent data quality issues, ensure data security, and streamline your data management processes.

We can help you navigate complex data management and emerge with accurate, reliable, and actionable data. Connect with us on our website, and we’ll schedule a time to chat.

Frequently Asked Questions

What are the main risks and challenges of data lakes?

The main risks and challenges of data lakes include data quality issues, lack of data governance, security and privacy concerns, and the potential for creating a disorganized data swamp.

How can data quality be maintained in a data lake?

To maintain data quality in a data lake, organizations should implement data validation, cleansing, and transformation processes, as well as establish a robust data catalog to track and manage metadata.
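Validation is often implemented as a set of named rules applied to each incoming record before it reaches a curated zone. The rules, field names, and accepted currencies below are hypothetical examples for an orders feed.

```python
from typing import Any, Callable

Record = dict[str, Any]

# Hypothetical rules for an "orders" feed: rule name -> predicate that must hold.
RULES: dict[str, Callable[[Record], bool]] = {
    "has_order_id": lambda r: bool(r.get("order_id")),
    "amount_non_negative": lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0,
    "currency_known": lambda r: r.get("currency") in {"USD", "EUR", "GBP"},
}


def validate(records: list[Record]) -> tuple[list[Record], list[tuple[Record, list[str]]]]:
    """Split records into those passing every rule and those failing at least one."""
    valid: list[Record] = []
    rejected: list[tuple[Record, list[str]]] = []
    for record in records:
        failures = [name for name, check in RULES.items() if not check(record)]
        if failures:
            rejected.append((record, failures))
        else:
            valid.append(record)
    return valid, rejected


rows = [
    {"order_id": "A1", "amount": 25.0, "currency": "USD"},
    {"order_id": "", "amount": -5, "currency": "USD"},
    {"order_id": "A3", "amount": 10, "currency": "JPY"},
]
valid, rejected = validate(rows)
for record, failures in rejected:
    print(record.get("order_id") or "<missing>", failures)
# <missing> ['has_order_id', 'amount_non_negative']
# A3 ['currency_known']
```

Keeping the names of the failed rules alongside each rejected record makes it easier to quarantine that record for review instead of silently dropping it.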

What are the top data lake governance practices?

Top data lake governance practices include defining and enforcing data access policies, maintaining a comprehensive data catalog, establishing data lineage, and implementing audit and compliance mechanisms.
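Enforcing access policies and keeping an audit trail can be sketched as a deny-by-default check that logs every decision. The zone names, roles, and in-memory `audit_log` are hypothetical; real lakes usually delegate this to the storage platform’s identity and access management.

```python
# Hypothetical zone-level policy: which roles may read each zone of the lake.
POLICY: dict[str, set[str]] = {
    "raw": {"data-engineer"},
    "curated": {"data-engineer", "analyst"},
    "restricted_pii": {"privacy-officer"},
}

audit_log: list[tuple[str, str, bool]] = []  # (user, zone, allowed)


def can_read(roles: set[str], zone: str) -> bool:
    """Deny by default: unknown zones grant nothing, and at least one role must match."""
    return bool(roles & POLICY.get(zone, set()))


def read_request(user: str, roles: set[str], zone: str) -> bool:
    """Check access and record the decision, allowed or not, for later compliance review."""
    allowed = can_read(roles, zone)
    audit_log.append((user, zone, allowed))
    return allowed


read_request("ana", {"analyst"}, "curated")
read_request("ana", {"analyst"}, "restricted_pii")
print(audit_log)  # [('ana', 'curated', True), ('ana', 'restricted_pii', False)]
```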

What are data lake security and privacy best practices?

Data lake security and privacy best practices involve access control, encryption (both at rest and in transit), data masking, and monitoring for potential threats, as well as adhering to relevant data protection regulations.
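Two common masking techniques are partial redaction and pseudonymization with a keyed hash. This sketch uses Python’s standard `hmac` and `hashlib` modules; the function names and the demo key are assumptions, and a real key would be loaded from a secrets manager rather than hard-coded.

```python
import hashlib
import hmac


def mask_email(email: str) -> str:
    """Keep the first character and the domain, hide the rest of the local part."""
    local, sep, domain = email.partition("@")
    if not sep or not local:
        return "***"  # not a recognizable address: hide it entirely
    return f"{local[0]}***@{domain}"


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 hash (truncated to 16 hex chars).

    The same input and key always give the same token, so masked tables can
    still be joined, and without the key the original value cannot practically
    be recovered from the token.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


# Demo key only; a real key would come from a secrets manager, never source code.
DEMO_KEY = b"demo-key-not-for-production"

print(mask_email("jane.doe@example.com"))                                      # j***@example.com
print(pseudonymize("cust-42", DEMO_KEY) == pseudonymize("cust-42", DEMO_KEY))  # True
```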

Why is metadata management essential for data lakes?

Metadata management is essential for data lakes to improve discoverability, data lineage, and data quality. It helps users understand the context and origin of data and enables more efficient data processing and analytics.

How can organizations prevent a data swamp scenario?

Organizations can prevent a data swamp scenario by implementing strong data governance practices, maintaining a well-structured data catalog, and routinely auditing and cleaning their data lake to remove outdated or irrelevant information.

What are typical data lake architecture pitfalls to avoid?

Typical data lake architecture pitfalls to avoid include poor data ingestion strategies, inadequate data partitioning and indexing, and failing to optimize the lake for specific workloads or storage formats.
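Partitioning often follows the Hive-style `key=value` directory convention, which lets query engines skip partitions a query does not need. This is a simplified sketch: the path layout, dataset name, and `prune` helper are illustrative, and real engines perform pruning themselves from table metadata.

```python
from datetime import date


def partition_path(root: str, dataset: str, day: date) -> str:
    """Build a Hive-style path, e.g. 'lake/events/year=2024/month=03/day=07'."""
    return f"{root}/{dataset}/year={day.year}/month={day.month:02d}/day={day.day:02d}"


def prune(paths: list[str], year: int, month: int) -> list[str]:
    """Toy partition pruning: keep only directories a one-month query would read."""
    wanted = f"/year={year}/month={month:02d}/"
    return [p for p in paths if wanted in p + "/"]


paths = [
    partition_path("lake", "events", date(2024, 3, 1)),
    partition_path("lake", "events", date(2024, 3, 2)),
    partition_path("lake", "events", date(2024, 4, 1)),
]
print(prune(paths, 2024, 3))
# ['lake/events/year=2024/month=03/day=01', 'lake/events/year=2024/month=03/day=02']
```

The choice of partition columns matters: partitioning by a column that queries rarely filter on adds directories without letting the engine skip anything.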

How do you balance scalability with data management in a data lake?

Balancing scalability with data management in a data lake involves optimizing storage and compute resources, implementing data partitioning and indexing strategies, and leveraging technologies like auto-scaling and tiered storage.
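Tiered storage is often driven by how recently data was accessed. The thresholds and tier names here are hypothetical, meant only to show the shape of such a rule; cloud storage providers implement tiering through their own lifecycle policies.

```python
from datetime import date

# Hypothetical tiering rule; thresholds are illustrative, not recommendations.
TIERS = [(30, "hot"), (180, "warm")]  # (max days since last access, tier), checked in order
ARCHIVE_TIER = "cold"


def storage_tier(last_accessed: date, today: date) -> str:
    """Pick a storage tier based on how many days ago the data was last read."""
    idle_days = (today - last_accessed).days
    for max_days, tier in TIERS:
        if idle_days <= max_days:
            return tier
    return ARCHIVE_TIER


today = date(2024, 6, 30)
for last in (date(2024, 6, 20), date(2024, 3, 1), date(2023, 1, 1)):
    print(last, storage_tier(last, today))
# 2024-06-20 hot
# 2024-03-01 warm
# 2023-01-01 cold
```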

How do AI and machine learning improve data lake management?

AI and machine learning can improve data lake management by automating data quality checks, metadata management, and data categorization. They can also help identify patterns and anomalies in the data to assist in decision-making and analytics.

What steps are needed to create a successful data lake strategy?

To create a successful data lake strategy, organizations should define clear business objectives, establish robust data governance practices, choose the right technology stack and architecture, and plan for scalability and performance optimization. Continuous monitoring and evaluation of the data lake implementation are also crucial for long-term success.