Welcome to this 10-step field guide to AI for COOs. This guide is designed for busy executives like you who are not data professionals, and it aims to demystify the process of integrating AI and machine learning into your organization.

With the roadmap provided here, you’ll learn how to efficiently and economically prepare your organization for AI, from the initial stages of understanding the fundamental principles of AI and ML to successfully implementing, scaling, and evaluating these transformative technologies.

Let’s embark on this transformative journey together.

1. Introduction to AI and Machine Learning

Artificial intelligence (AI) and machine learning (ML) are reshaping the business landscape and opening up new opportunities for efficiency, growth, and innovation.

But before we delve into how to deploy these technologies within your organization, it is important to understand what they are, how they work, and why they matter.

In this section, we’ll start by defining AI and ML and exploring their origins and their different branches. We’ll go beyond the buzzwords to clarify what these terms really mean.

We’ll then discuss how these technologies operate and differentiate AI from traditional programming. We’ll also touch on the various types of ML to give you a broad understanding of these key concepts.

Next, we’ll delve into why AI and ML are so important, showcasing their benefits and potential impact on your organization. We’ll also address some common challenges and risks associated with AI and ML deployment.

Finally, we’ll wrap up with real-world success stories to show how these technologies have been successfully implemented across various industries.

By the end of this section, you will have a solid grasp of AI and ML’s fundamental concepts to prepare you for the subsequent steps in your AI and ML deployment journey.

Defining AI

As an enterprise COO, you are probably already familiar with the definition of AI. Still, a short refresher on its history won’t hurt.

Artificial intelligence, commonly known as AI, is a branch of computer science that aims to create machines capable of performing tasks that usually require human intelligence. These tasks include understanding natural language, recognizing patterns, solving problems, and making decisions.

The term “artificial intelligence” was coined by John McCarthy in the 1955 proposal for the Dartmouth Conference, held in 1956, which marked the birth of AI as a field of study.

A Brief History of AI

AI has evolved significantly since its inception. In the early years, AI research focused on symbolic methods and problem-solving models. This era saw the development of systems that could mimic human problem-solving skills, albeit in specific domains.

In the 1990s, machine learning came to the fore, harnessing the power of data and statistics to train machines to improve their performance over time. The advent of the internet and the explosion of digital data gave machine learning a significant boost.

More recently, we have seen the rise of deep learning, a subset of machine learning that leverages neural networks with many layers (hence “deep”) to model and understand complex patterns. Enabled by advances in computational power and the availability of large datasets, deep learning has powered many recent breakthroughs in AI.

Branches/Types of AI

AI can be categorized into several branches or types, each with distinct characteristics and applications. For this guide, we’ll focus on two significant types: generative AI and predictive AI.

Generative AI

This branch of AI involves systems that can generate content. They are based on generative models, which can generate new data instances that resemble the training data. These models can create anything, from written text to images, music, and even voice.

A well-known example of generative AI is the generative adversarial network (GAN), a type of neural network architecture that pits two networks against each other: a generator that produces new, synthetic instances of data and a discriminator that tries to tell them apart from real data.

Applications of generative AI can be seen in diverse fields, from art and design (e.g., creating new graphic designs or artworks) to healthcare (e.g., creating synthetic patient data for research).

Predictive AI

Predictive AI, as the name suggests, is used to predict future outcomes based on historical data. It involves techniques such as machine learning and statistical modeling.

ML models are trained on a dataset and learn to predict outcomes based on patterns and relationships in that data. For example, a predictive AI model could be used to forecast customer behavior, predict disease progression in healthcare, or anticipate market trends in finance.

Each type of AI has its strengths and is suited to different tasks. Generative AI excels in tasks that require creativity and the generation of new content, while predictive AI is ideal for tasks that require making forecasts or predictions about future events. Both are crucial in transforming various aspects of an enterprise and driving innovation.

How AI Works

The exact workings of AI depend largely on the type of AI and the techniques used. However, at a high level, AI works by learning from data. An AI system is fed a large amount of data and uses algorithms to identify patterns in this data. Over time, the system improves its performance based on feedback or additional data.

AI vs. Traditional Programming

The main difference between AI and traditional programming lies in their approaches to problem-solving:

Traditional Programming

Here, a programmer explicitly tells the computer what to do. They define the rules and write the code telling the computer how to process input data to generate the desired output.

AI

In contrast, AI uses data and algorithms to learn how to reach a desired outcome. Instead of being explicitly programmed, an AI system learns from experience (i.e., data) and adjusts its algorithms based on what it learns to improve performance over time.
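The contrast can be made concrete with a small sketch. It is illustrative only: the function names, the order amounts, and the "large order" labels are all made up for this example. The first function encodes a rule a programmer chose by hand; the second derives its threshold from labeled examples.

```python
# Traditional programming: a person writes the rule by hand.
def is_large_order_rule(amount):
    return amount > 1000.0  # threshold chosen by a programmer


# Learning from data: the threshold is derived from labeled examples.
def learn_threshold(examples):
    """Midpoint between the largest 'not large' and the smallest 'large' amount."""
    largest_small = max(amount for amount, is_large in examples if not is_large)
    smallest_large = min(amount for amount, is_large in examples if is_large)
    return (largest_small + smallest_large) / 2


# Hypothetical history: (order amount, flagged as large by a human reviewer)
history = [(120.0, False), (480.0, False), (700.0, False), (900.0, True), (1500.0, True)]
threshold = learn_threshold(history)


def is_large_order_learned(amount):
    return amount > threshold


print(threshold)                      # 800.0
print(is_large_order_rule(850.0))     # False
print(is_large_order_learned(850.0))  # True
```

If the reviewers' labels change, the learned threshold changes with them, while the hand-written rule stays fixed until someone edits the code.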

Understanding AI and its potential can empower your organization to leverage this technology effectively, creating efficiencies, uncovering insights, and driving innovation.

Defining ML

Machine learning is a subset of AI that allows systems to automatically learn and improve from experience without being explicitly programmed. In other words, ML systems can learn from data, identify patterns, and make decisions with minimal human intervention.

Types of Machine Learning

There are three primary types of machine learning:

Supervised Learning

This is the most common type, where an algorithm learns from labeled data. During training, the model is provided with input–output pairs, and the goal is to learn a mapping from inputs to outputs. Once trained, the model can apply this mapping to new, unseen data.

Examples include regression and classification problems, such as predicting house prices or identifying emails as spam or not spam.
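As a minimal sketch of supervised learning, the snippet below fits a straight line to labeled input–output pairs using ordinary least squares and then applies it to an unseen input. The house sizes and prices are invented for illustration, and `fit_line` is a hypothetical helper name, not part of any library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one input feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up labeled data: house size (square meters) -> price (thousands)
sizes = [50.0, 70.0, 90.0, 110.0]
prices = [150.0, 210.0, 270.0, 330.0]

slope, intercept = fit_line(sizes, prices)   # training
predicted = slope * 100.0 + intercept        # prediction for an unseen 100 m2 house

print(slope, intercept)  # 3.0 0.0
print(predicted)         # 300.0
```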

Unsupervised Learning

Here, the algorithm learns from unlabeled data and discovers patterns or structures in it. The goal is to understand the underlying structure of the data or reduce its dimensionality. Common examples of unsupervised learning include clustering (grouping similar items) and association rules.
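Clustering can be sketched in a few lines. The example below is a simplified one-dimensional k-means: no labels are supplied, and the algorithm groups values around centers that it repeatedly recomputes. The spending figures and starting centers are assumptions chosen for illustration.

```python
def k_means_1d(values, centers, iterations=10):
    """Simplified 1-D k-means: assign each value to its nearest center, then re-average."""
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # An empty cluster keeps its previous center.
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers, clusters


# Made-up monthly spend per customer; no labels are provided
spend = [10, 12, 11, 95, 100, 105]
centers, clusters = k_means_1d(spend, centers=[0.0, 50.0])

print(centers)   # [11.0, 100.0]
print(clusters)  # [[10, 12, 11], [95, 100, 105]]
```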

Reinforcement Learning

This type of ML involves an agent that interacts with its environment by producing actions and receiving rewards or penalties in return. The goal is to learn a policy that maximizes the sum of rewards over time. Reinforcement learning is often used in robotics, gaming, and navigation.
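The agent, action, and reward loop can be sketched with tabular Q-learning, one common reinforcement learning technique. Everything here is an assumption made for illustration: a toy environment of five positions on a line, a reward of 1 for reaching the last position, and hand-picked learning settings. The agent is never told that moving right is correct; it should discover this from rewards.

```python
import random

N_STATES = 5                  # positions 0..4 on a line; position 4 is the goal
ACTIONS = ["left", "right"]


def step(state, action):
    """Move one position; arriving at the goal pays a reward of 1."""
    if action == "left":
        next_state = max(0, state - 1)
    else:
        next_state = min(N_STATES - 1, state + 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(1000):  # safety cap on episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])  # exploit
            next_state, reward = step(state, action)
            future = max(q[(next_state, a)] for a in ACTIONS)
            # Q-learning update: nudge the estimate toward reward + discounted future value.
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = next_state
            if state == N_STATES - 1:
                break
    return q


q_table = train()
policy = [max(ACTIONS, key=lambda a: q_table[(s, a)]) for s in range(N_STATES - 1)]
print(policy)  # the learned action for positions 0 through 3
```

The discount factor `gamma` makes rewards that arrive sooner worth more, which is why the learned policy favors the shortest path to the goal.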

How Machine Learning Works

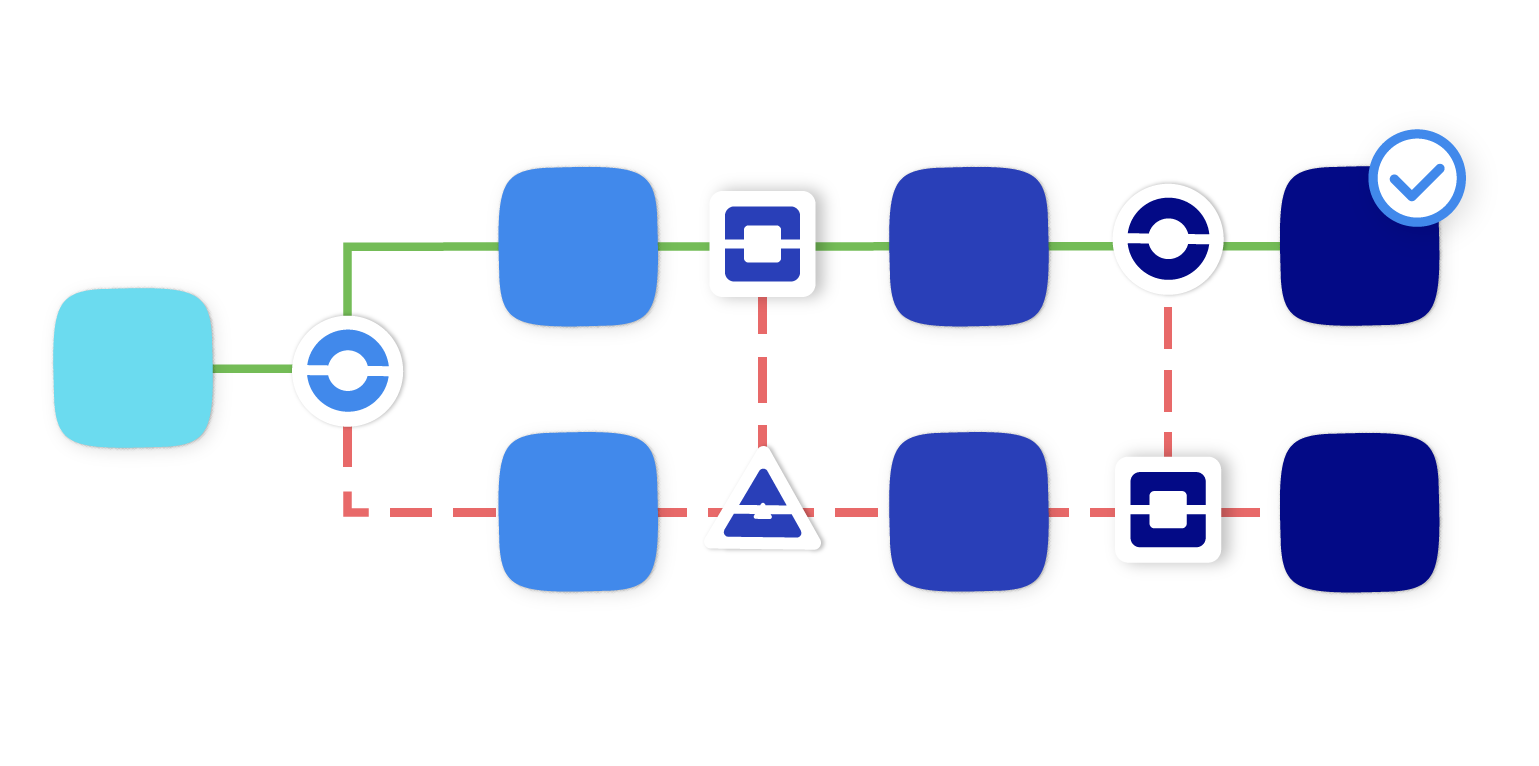

Machine learning operates through a series of stages to successfully learn from data. It begins with data collection, where relevant data is gathered and prepared for training the model. This data forms the basis from which the model will learn.

After collecting the data, the next step involves selecting a suitable ML algorithm that aligns with the problem you are trying to solve. Once a model is chosen, the training process begins.

During training, the collected data is fed into the model, allowing it to learn and identify patterns. Then the model’s performance is evaluated using unseen data to verify that it has learned effectively. This process can reveal areas for improvement, leading to the final stage of machine learning: tuning.

In this phase, adjustments are made to the model to enhance its performance based on the insights obtained from the evaluation stage. This iterative process of training, evaluation, and tuning is central to creating a successful ML model.
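The stages described above can be sketched end to end. This is a toy illustration under stated assumptions: the data is made up, the model is a simple k-nearest-neighbors classifier written by hand, and "tuning" means trying a few values of `k`. In practice, a final test set would usually be kept separate from the data used for tuning.

```python
def knn_predict(train, x, k):
    """Predict by majority vote among the k training points closest to x."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    votes_for_one = sum(label for _, label in nearest)
    return 1 if votes_for_one * 2 > k else 0


def accuracy(train, unseen, k):
    """Share of unseen examples the model labels correctly."""
    correct = sum(1 for x, label in unseen if knn_predict(train, x, k) == label)
    return correct / len(unseen)


# 1. Collect: made-up (feature, label) pairs; (4.4, 1) is a deliberately noisy point.
train_data = [(1, 0), (2, 0), (3, 0), (4, 0), (4.4, 1), (6, 1), (7, 1), (8, 1), (9, 1)]
unseen_data = [(4.5, 0), (2.5, 0), (6.5, 1), (8.5, 1)]

# 2-4. Select a model family (k-NN), then train and evaluate it for each candidate k.
candidates = [1, 3, 5]
scores = {k: accuracy(train_data, unseen_data, k) for k in candidates}

# 5. Tune: keep the setting that scored best on the unseen data.
best_k = max(candidates, key=lambda k: scores[k])

print(scores)  # {1: 0.75, 3: 1.0, 5: 1.0}
print(best_k)  # 3
```

With `k=1` the model copies the single noisy training point and misclassifies a nearby example; a larger `k` averages over more neighbors and is less sensitive to that noise.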

Popular ML Algorithms

Here are a few popular machine-learning algorithms:

- Linear Regression: A simple but widely used algorithm for predicting a continuous output variable based on one or more input features

- Logistic Regression: Similar to linear regression but used for classification problems where the output is binary (e.g., yes/no, true/false)

- Decision Trees: A model that reaches a prediction by following a tree-like sequence of if-then questions about the input features. Useful for both regression and classification problems

- Support Vector Machines (SVM): A technique that classifies data by finding the best boundary that separates different categories of data points

- Neural Networks: Models built from layers of interconnected nodes, loosely inspired by the brain, that learn to process complex data inputs such as images, text, and audio

- K-Means Clustering: An unsupervised learning algorithm for grouping data into clusters based on their similarity

Understanding machine learning is the key to unlocking the vast potential of AI. By identifying patterns and learning from data, ML can help organizations make better decisions, predict future trends, and automate tasks.

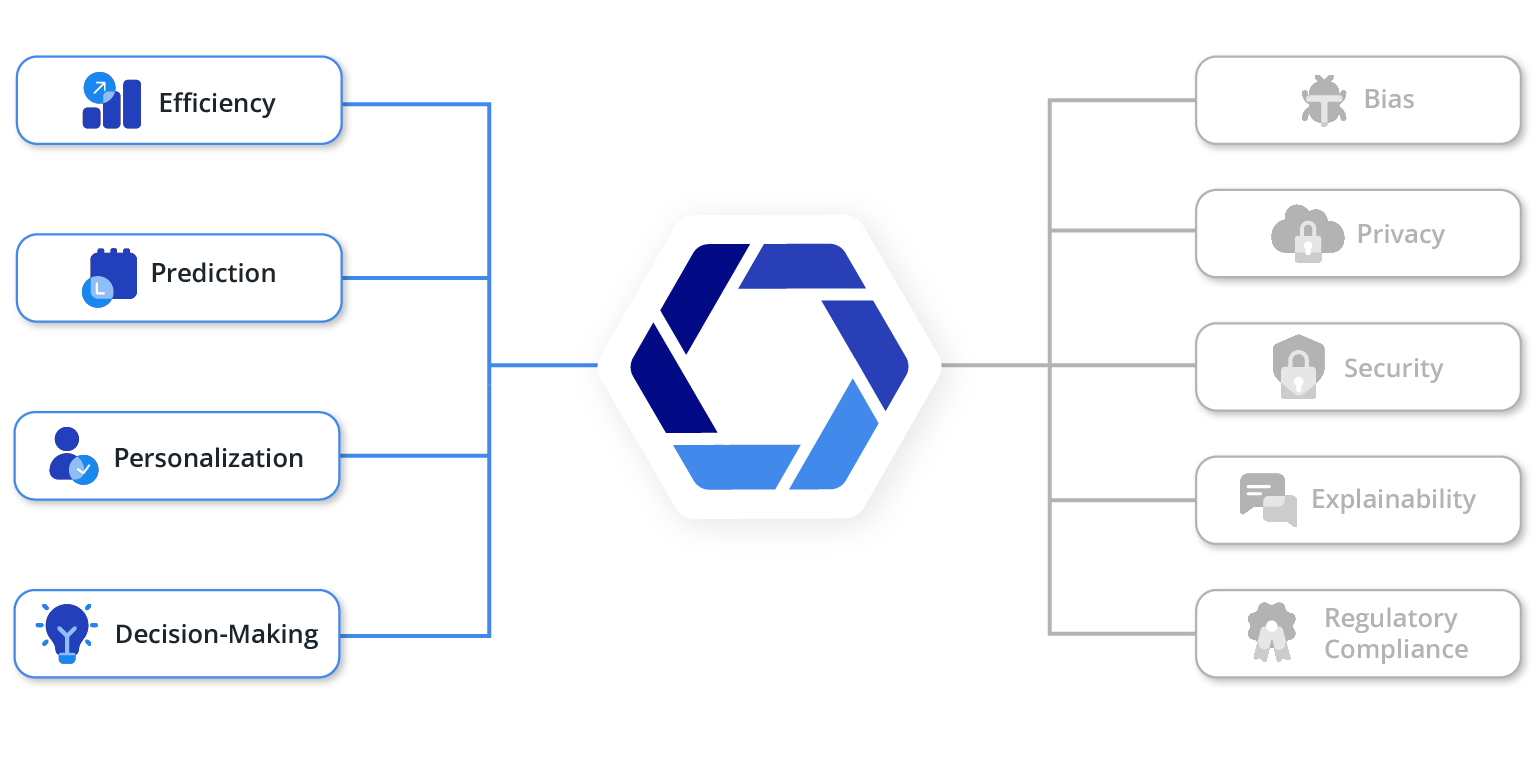

The Importance and Benefits of AI/ML

As we delve into a more data-driven era, the importance and benefits of artificial intelligence and machine learning cannot be overstated. When appropriately applied, these technologies can profoundly impact efficiency, prediction, personalization, and decision-making within an organization.

Efficiency

AI and ML can dramatically improve organizational efficiency by automating routine tasks. By assuming responsibility for time-consuming, repetitive work, they free up employees to focus on higher-value tasks that require human judgment and creativity. This increases productivity and can lead to significant cost savings.

Moreover, AI and ML can often perform these tasks more accurately and consistently than humans, reducing errors and improving quality.

Prediction

One of the greatest strengths of ML is its predictive capabilities. By learning from historical data, ML algorithms can identify patterns and trends not easily discernible to humans.

They can be used to predict future outcomes in various domains, from sales forecasts and customer churn to equipment failures and disease outbreaks. These predictive insights can enable proactive decision-making, which helps organizations mitigate risks and seize opportunities.

Personalization

AI/ML has revolutionized the field of personalization. Today, businesses can use these technologies to understand their customers at an individual level and tailor their offerings accordingly.

This can be seen in the personalized recommendations offered by streaming services, the targeted advertising on social media, and the customized shopping experiences on e-commerce sites. Personalization can enhance customer satisfaction, drive user engagement, and boost sales.

Decision-Making

Perhaps most importantly, AI and ML can augment human decision-making. These technologies can provide decision-makers with timely, data-driven insights by processing and interpreting vast amounts of data quickly.

This allows organizations to make more informed decisions, whether that means identifying the best candidate for a job, selecting the optimal route for a delivery, or determining the ideal price for a product. Moreover, AI/ML can help identify biases in decision-making and promote fairer, more objective outcomes.

Risks and Challenges of Using AI

While AI and ML can deliver substantial benefits, they also have inherent risks and challenges that must be carefully managed. These include bias, privacy, security, explainability, and regulatory compliance.

Bias

AI and ML systems learn from data. If the data they learn from is biased, the decisions they make can be biased too. This can lead to unfair outcomes that disadvantage certain groups.

For instance, a hiring algorithm trained on data from a company that has historically hired men over women might undervalue female candidates. To mitigate this risk, it is crucial to use diverse and representative data for training and regularly test and adjust algorithms for bias.
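One simple way to test for this kind of bias is to compare selection rates across groups. The sketch below uses invented decisions and a hypothetical helper, `selection_rates`. The 0.8 cutoff is the "four-fifths" rule of thumb sometimes used in employment contexts; it is included here as an assumption, not as a legal standard for your organization.

```python
def selection_rates(decisions):
    """decisions: list of (group, selected) pairs -> {group: share selected}."""
    totals = {}
    selected = {}
    for group, was_selected in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + (1 if was_selected else 0)
    return {group: selected[group] / totals[group] for group in totals}


# Made-up model outputs: 6 of 10 men selected, 3 of 10 women selected
decisions = (
    [("men", True)] * 6 + [("men", False)] * 4
    + [("women", True)] * 3 + [("women", False)] * 7
)

rates = selection_rates(decisions)
impact_ratio = min(rates.values()) / max(rates.values())
needs_review = impact_ratio < 0.8  # assumed rule-of-thumb threshold

print(rates)         # {'men': 0.6, 'women': 0.3}
print(impact_ratio)  # 0.5
print(needs_review)  # True
```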

Privacy

AI and ML often rely on vast amounts of personal data, which raises privacy concerns. How is the data collected? Who has access to it? How is it used and stored?

These are all questions that organizations using AI/ML must be prepared to answer. They must ensure they have robust data governance policies and practices and comply with all relevant privacy laws and regulations.

Security

AI systems can be targets for malicious actors. These actors might try to manipulate the system’s behavior (known as an adversarial attack), steal the data the system is using, or gain access to the system’s underlying infrastructure.

Therefore, organizations must ensure they have strong security measures, including encryption, access controls, and intrusion detection systems.

Explainability

AI and ML systems, particularly those using complex deep learning models, can be notoriously difficult to interpret. They are often seen as “black boxes” that make decisions without explaining why.

This lack of transparency can make it difficult for humans to trust and effectively manage AI systems. It can also make it challenging to identify and correct issues like bias. Therefore, there is growing interest in explainable AI (XAI)—AI systems that can make their reasoning transparent.

Regulatory Compliance

As AI becomes more pervasive, it is attracting more attention from regulators. Some jurisdictions already have laws and regulations that pertain to AI, particularly around privacy and bias.

Organizations must keep abreast of this evolving legal landscape and ensure their use of AI complies with all relevant rules. This might involve working with legal experts and investing in compliance tools and processes.

Real-World AI/ML Success Stories

Artificial intelligence and machine learning have found applications across a wide variety of industries, revolutionizing traditional practices and delivering notable success. Here are a few examples that demonstrate the potential of these technologies:

Healthcare: Google’s DeepMind

DeepMind, owned by Google, developed an AI system for diagnosing eye diseases. The system uses deep learning to analyze 3D scans of patients’ eyes and detect more than 50 eye conditions with 94% accuracy. This tool helps doctors make quicker and more accurate diagnoses, enhancing patient outcomes.

Retail: Amazon

Amazon has been a trailblazer in AI/ML applications in retail. It uses AI for product recommendations, fraud detection, warehouse automation, and even predicting what products will be popular in the future. Its recommendation engine, powered by ML, personalizes the shopping experience for each customer, driving sales and improving customer satisfaction.

Finance: American Express

American Express uses ML to detect and prevent credit card fraud. The company processes billions of transactions, and ML algorithms help identify patterns and flag potential fraudulent activities. This AI-driven approach has significantly reduced fraud losses and improved customer trust.

Manufacturing: General Electric (GE)

GE uses AI and ML for predictive maintenance in its manufacturing facilities. Sensors placed on equipment feed data to ML models that predict when a machine is likely to fail. This allows the company to perform maintenance before a failure occurs, reducing downtime and saving money.

Transportation: Uber

Uber uses AI for various purposes, from estimating arrival times to setting prices and matching drivers with riders. One notable use of AI is in its development of self-driving cars. Its AI algorithms process data from sensors on the vehicle to navigate roads, avoid obstacles, and obey traffic laws.

Agriculture: Blue River Technology

Blue River Technology, acquired by John Deere, developed a machine called “See & Spray” that uses computer vision and ML to recognize and spray weeds growing among cotton plants. This precision farming significantly reduces the volume of chemicals used, saving costs and reducing environmental impact.

These examples illustrate the potential of AI and ML to drive efficiency, improve decision-making, enhance customer experiences, and even create new business models. They are a testament to the transformative power of AI and ML across various industries.

Key Concepts: Introduction to AI/ML

- AI/ML Concepts: Learn the basics and differences from traditional programming.

- AI/ML Significance: Understand its benefits, impacts, and associated risks.

- Real-world Examples: Examine successful AI/ML implementations.

2. Understanding Data and Its Role in AI/ML

In the world of AI/ML, data serves as the fuel that powers these innovative systems. A robust and effective AI/ML strategy hinges on the quality, volume, and relevance of the data at hand.

In this section, we’ll unpack why data is frequently referred to as the “new oil” and scrutinize its pivotal position in driving intelligent systems. We’ll delve into the mechanisms of data collection, touching on its diverse sources, methods, and legal aspects.

Moving forward, we’ll shed light on data management, explaining how data is stored, organized, and secured. We’ll also explore the significance and procedures of data cleaning and preprocessing, as they contribute to the effectiveness of ML models.

Lastly, we’ll focus on data quality, unraveling the characteristics of “good” data and discussing the repercussions of poor-quality data.

By grasping these concepts, you will be poised to effectively harness the power of data in your AI/ML initiatives.

The Importance of Data: The New Oil in AI/ML

In the rapidly evolving digital landscape, data is often called the “new oil” because it powers AI and ML technologies. This metaphor underscores its increasing value, likening it to the pivotal role oil played during the Industrial Revolution.

Just as oil was a raw commodity that drove significant industrial and economic growth once refined, data realizes its potential only in refined form. Raw data, when processed and analyzed, can unlock valuable insights, propel innovation, and form the backbone of modern business operations.

The proliferation of digital technologies and the Internet of Things (IoT) has led to an unprecedented generation of data. When harnessed effectively, this data can provide profound insights and offer a competitive edge.

Within the realm of AI/ML, the importance of data is paramount. These technologies depend heavily on abundant, high-quality data to learn, make decisions, and refine their functionality over time.

ML algorithms, for example, learn by recognizing patterns in data. In general, the larger and more diverse the dataset, the more accurate and reliable the ML model tends to become, provided the data is of good quality.

Furthermore, the iterative learning process integral to AI and ML models allows them to continually improve and adapt to changing conditions as they gain more experience and exposure to data. They use this data to make informed decisions in various contexts, whether recommending a product, diagnosing a disease, or identifying fraudulent transactions.

Additionally, historical data empowers AI/ML models to predict future outcomes, a capability invaluable in several sectors. From forecasting sales to predicting equipment failure or disease outbreaks, this predictive capacity can transform organizational decision-making and strategy.

Thus, data is the fuel that powers AI/ML technologies. Just as a car requires fuel to run, AI/ML systems need data to function effectively.

Any organization aspiring to harness the power of AI/ML must prioritize collecting, managing, and ethically analyzing data. By recognizing and leveraging the pivotal role of data in AI/ML, enterprises can fully tap into the transformative potential of these technologies.

Data Collection: Sources, Methods, and Legal Considerations

Data collection is a fundamental step in the AI/ML process. Collecting high-quality, relevant data from the right sources can significantly enhance the effectiveness of AI/ML models.

Here, we discuss the different sources of data, methods for collection, and legal considerations surrounding this process.

Sources of Data

Data can come from various sources, depending on the industry and the specific AI/ML use case. These sources include:

Internal Data

This can be generated through your organization’s daily operations, such as transaction records, customer data, and data from company databases.

Internet Data

This includes social media feeds, website analytics, online reviews, and data from web scraping.

Third-Party Data

Commercial data brokers and public data sets can provide additional insights.

IoT Devices

These can generate vast amounts of real-time data from sensors and smart devices.

Surveys and Interviews

These can provide valuable data in research-driven fields.

Methods for Collection

The methods for data collection can vary significantly based on the source of the data:

Data Extraction Tools

These are software tools designed to extract data from different sources, such as databases, websites, or software applications.

APIs

Application programming interfaces (APIs) provide a means to extract data from online services and databases.

Web Scraping

This method involves extracting data directly from websites.

IoT Devices

Data can be directly streamed from sensors and other connected devices.

Direct Input

In some cases, data may be collected through direct user input, such as through forms or surveys.

Legal Considerations

Data collection comes with legal obligations. Privacy laws, such as the General Data Protection Regulation (GDPR) in the European Union and the California Consumer Privacy Act (CCPA) in California, place strict limitations on what data can be collected and how it can be used.

Here are some key considerations:

Consent

Many privacy laws require organizations to obtain consent from individuals, or to establish another lawful basis, before collecting their personal data.

Purpose Limitation

Personal data should be collected only for specified, explicit, and legitimate purposes.

Data Minimization

Only the data required for the intended purpose should be collected.

Transparency

Individuals should be informed about what data is being collected, why it is being collected, and how it will be used.

Security

Organizations must ensure appropriate security measures are in place to protect the collected data.

Effective data collection is critical to successful AI/ML deployment. However, it is important to focus not only on the quantity of data but also on its quality and relevance. Furthermore, data privacy requirements should always be taken into account to ensure compliance and maintain trust.

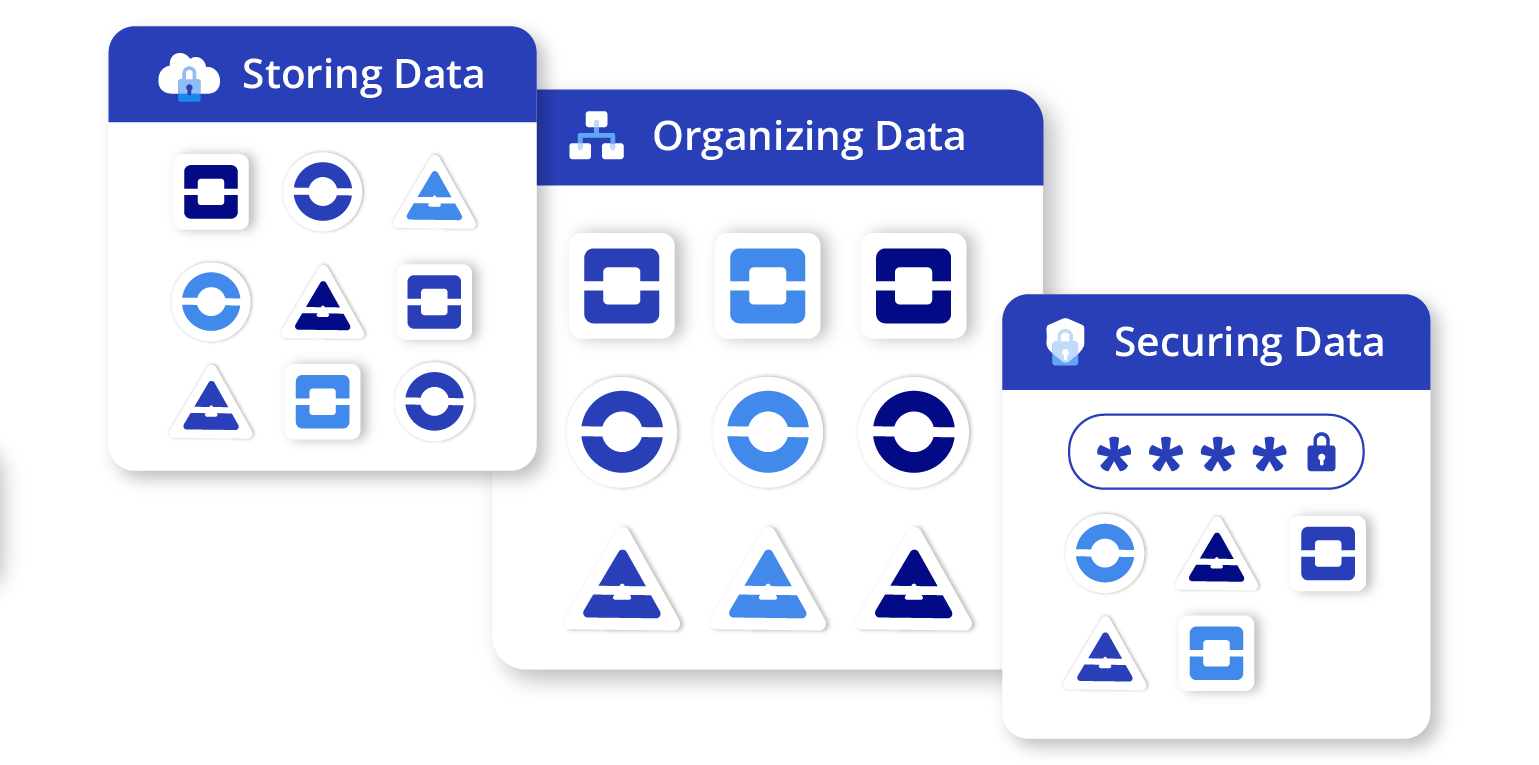

Data Management: Storing, Organizing, and Securing Data

Effective data management is another vital part of the AI/ML life cycle. It involves storing, organizing, and securing data to ensure accessibility, reliability, and relevance for AI/ML applications. Let’s delve into each aspect to better understand its importance.

Storing Data

Data storage involves choosing a suitable location and format to keep your data. Depending on the volume of data, the frequency of access, and the security requirements, the storage options vary:

On-Premises Storage

This involves storing data on local servers within your organization’s physical premises. It offers high security and control but may require substantial infrastructure investment and maintenance.

Cloud Storage

Cloud-based solutions like AWS, Google Cloud, or Azure provide scalable and flexible data storage options. These services manage infrastructure, so your organization can focus on deriving value from the data rather than its upkeep.

Hybrid Storage

A combination of on-premises and cloud storage, hybrid storage offers a balance between control and scalability.

Organizing Data

Proper data organization is critical to ensuring its usability for AI/ML purposes. It involves structuring and cataloging data to enable easy retrieval and analysis:

Data Architecture

This involves defining how data is collected, stored, processed, and accessed across the organization. A well-planned data architecture enables efficient data flow and easy integration of AI/ML systems.

Data Catalog

A data catalog provides metadata about data stored across the organization. It is a central reference point for understanding what data is available, where it is stored, and its relevance.

Data Governance

This refers to the overall management of data availability, usability, integrity, and security in an enterprise. It involves defining policies and procedures for data handling to ensure consistency and compliance.

Securing Data

Securing data involves implementing measures to protect data from unauthorized access, corruption, or theft:

Data Encryption

This involves encoding data to protect it from unauthorized access. Both data at rest (stored data) and data in transit (data being transmitted) should be encrypted.

Access Control

Implementing stringent access controls ensures that only authorized individuals can access specific data.

Backup and Recovery

Regular backups protect against data loss, while recovery strategies ensure that data can be restored in case of a disaster.

Compliance

Organizations must comply with relevant data protection regulations, which involve strict rules about data collection, storage, and processing.

Effective data management not only ensures that data is easily accessible for AI/ML purposes but also ensures that it is protected and compliant with relevant regulations. A carefully planned and executed data management strategy is vital to unlocking the full potential of AI/ML in an enterprise.

The Necessity of Data Cleaning and Preprocessing

Once data is collected and stored, it is not immediately ready for use in ML models. The data must first go through cleaning and preprocessing to ensure it is structured properly, remove any errors or inaccuracies, and transform it into a format suitable for AI/ML algorithms. This step is crucial as data quality directly impacts the performance of AI/ML models.

Data preprocessing is the step in which data is transformed into a format that machine learning models can understand and use.

If the data is inaccurate, inconsistent, or contains errors, the model’s performance can be significantly compromised. Here’s why these processes are necessary:

Reducing Noise

Cleaning reduces noise in the data—the random variance or inconsistencies that can distort the performance of an AI/ML model.

Handling Missing Values

Datasets often have missing values, which can lead to bias or inaccuracies in the model. Cleaning helps identify and address these missing values.

Ensuring Consistency

Data often comes from multiple sources, leading to inconsistencies. Cleaning ensures the data is uniform and consistent.

Improving Accuracy

Clean, well-structured data improves the accuracy of AI/ML models, leading to more reliable results and predictions.

Techniques for Data Cleaning

There are several techniques for data cleaning, depending on the type of data and the specific issues it may have:

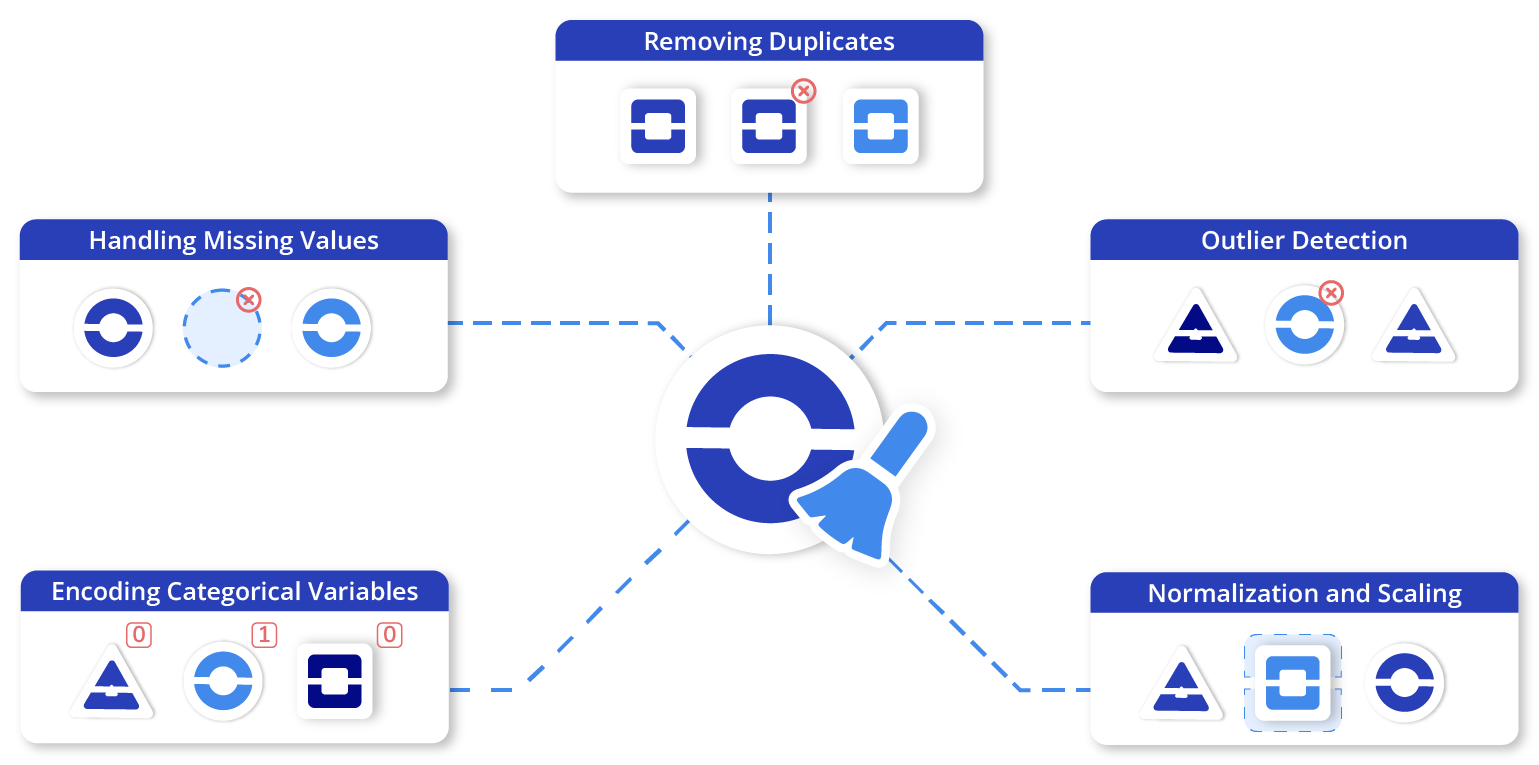

Removing Duplicates

Duplicate values can skew the data and lead to biased models. Tools and functions are available in most programming languages to identify and remove these duplicates.
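A minimal sketch of duplicate removal in plain Python follows. The records and the `drop_duplicates` helper are hypothetical; which fields define a "duplicate" is a business decision and is passed in as `key_fields`.

```python
def drop_duplicates(rows, key_fields):
    """Keep the first row for each unique combination of key_fields."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(row[field] for field in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


# Made-up customer records; the third repeats the first
records = [
    {"customer_id": 1, "email": "ana@example.com"},
    {"customer_id": 2, "email": "ben@example.com"},
    {"customer_id": 1, "email": "ana@example.com"},
]

cleaned = drop_duplicates(records, key_fields=["customer_id", "email"])
print(len(records), len(cleaned))  # 3 2
```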

Handling Missing Values

Depending on the nature and volume of missing data, different strategies can be applied. These include removing rows with missing data, filling missing values with a calculated statistic (like mean or median), or using ML techniques to predict and fill in the missing values.
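As an illustration of the second strategy, the sketch below fills missing values with the median of the known values. The ages are invented, and `fill_with_median` is a hypothetical helper; whether median imputation is appropriate depends on why the values are missing.

```python
from statistics import median


def fill_with_median(values):
    """Replace None entries with the median of the known values."""
    known = [v for v in values if v is not None]
    fill_value = median(known)
    return [fill_value if v is None else v for v in values]


ages = [34, None, 29, 41, None, 38]  # made-up data with two gaps
filled = fill_with_median(ages)
print(filled)  # [34, 36.0, 29, 41, 36.0, 38]
```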

Outlier Detection

Outliers can significantly distort predictions. Statistical methods, such as the Z-score or the interquartile range (IQR) method, can be used to detect and handle them.
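The IQR method can be sketched with Python's standard library. The multiplier of 1.5 is a common convention and is treated here as an assumption; the delivery times are made up, and `iqr_outliers` is a hypothetical helper name.

```python
from statistics import quantiles


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR below Q1 or above Q3."""
    q1, _, q3 = quantiles(values, n=4)  # quartile cut points
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


delivery_days = [10, 12, 11, 13, 12, 11, 10, 95]  # made-up data with one extreme value
print(iqr_outliers(delivery_days))  # [95]
```

Flagging a value is only the first step; whether to remove, cap, or investigate it depends on whether it is an error or a genuine rare event.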

Normalization and Scaling

These techniques transform numeric data to a common scale without distorting the differences in ranges of values. This is particularly important for certain algorithms sensitive to the scale of the input features.
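Two common variants are sketched below: min-max scaling, which maps values into the range 0 to 1, and standardization, which centers values on 0 with unit spread. The revenue figures are invented, and both helpers are hypothetical names. Neither handles a column where every value is identical, which would cause a division by zero.

```python
from statistics import mean, pstdev


def min_max_scale(values):
    """Rescale values linearly so the smallest becomes 0 and the largest 1."""
    lowest, highest = min(values), max(values)
    return [(v - lowest) / (highest - lowest) for v in values]


def standardize(values):
    """Subtract the mean and divide by the (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]


revenue = [20.0, 40.0, 60.0, 80.0, 100.0]  # made-up figures

scaled = min_max_scale(revenue)
z_scores = standardize(revenue)

print(scaled)       # [0.0, 0.25, 0.5, 0.75, 1.0]
print(z_scores[2])  # 0.0 (the middle value equals the mean)
```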

Encoding Categorical Variables

Many ML models require inputs to be numerical. Encoding transforms categorical data into a numerical format. Techniques include one-hot encoding, ordinal encoding, or binary encoding.
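One-hot encoding, the first technique mentioned, can be sketched as follows. Each category becomes its own 0/1 column. The region names are made up, and `one_hot` is a hypothetical helper rather than a library function.

```python
def one_hot(values):
    """Return (categories, rows), where each row has a 1 in its category's column."""
    categories = sorted(set(values))
    rows = [[1 if value == category else 0 for category in categories] for value in values]
    return categories, rows


regions = ["north", "south", "north", "west"]
categories, encoded = one_hot(regions)

print(categories)  # ['north', 'south', 'west']
print(encoded)     # [[1, 0, 0], [0, 1, 0], [1, 0, 0], [0, 0, 1]]
```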

Data cleaning and preprocessing are vital steps in preparing your data for AI/ML. By investing time in these processes, you can significantly enhance the performance of your AI/ML models and ensure that they produce reliable, accurate results.

The Crucial Role of Data Quality

Data quality is a critical factor in the success of any AI/ML initiative. The characteristics of “good” data ensure it can effectively feed ML algorithms and drive useful predictions. Conversely, poor-quality data can have severe impacts: it can skew results, lead to incorrect decisions, and incur significant financial costs.

Characteristics of Good Data

Good-quality data generally possesses the following characteristics:

Accuracy

Accurate data correctly represents the real-world entities or events it is supposed to describe. Inaccuracies can arise from faulty data collection instruments or human error.

Completeness

Complete data has no missing values or gaps. Incomplete data can lead to biased or incorrect results from an AI/ML model.

Consistency

Consistent data is free from contradictions and matches across all instances. Discrepancies can arise when data is collected from different sources or at different times without proper synchronization.

Timeliness

Timely data is available when it is needed. Old data may not reflect the current situation and could lead to flawed insights.

Relevance

Relevant data has a meaningful connection to the question at hand. Irrelevant data, even if accurate and timely, may not contribute to meaningful insights and could unnecessarily complicate the model.

Granularity

Granular data is detailed enough for the task at hand but not excessively so. Overly detailed data can cause problems with processing and privacy, while data that is too coarse may not capture necessary insights.
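Some of these characteristics can be measured directly. As a minimal sketch, the snippet below reports completeness as the share of rows with a non-empty value in each field. The records, field names, and the `completeness` helper are all assumptions made for illustration; other characteristics such as accuracy or relevance usually need domain review rather than a formula.

```python
def completeness(rows, fields):
    """Share of rows that have a non-empty value, reported per field."""
    return {
        field: sum(1 for row in rows if row.get(field) not in (None, "")) / len(rows)
        for field in fields
    }


# Made-up customer records with two gaps
rows = [
    {"id": 1, "email": "ana@example.com", "country": "PT"},
    {"id": 2, "email": "", "country": "DE"},
    {"id": 3, "email": "cy@example.com", "country": None},
    {"id": 4, "email": "di@example.com", "country": "FR"},
]

report = completeness(rows, ["id", "email", "country"])
print(report)  # {'id': 1.0, 'email': 0.75, 'country': 0.75}
```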

Impact of Poor-Quality Data

The ramifications of poor-quality data are manifold and significantly detrimental.

For starters, AI/ML models trained on subpar data can yield inaccurate or biased predictions, which, in turn, lead to incorrect decisions and compromised business outcomes. Such inaccuracies can also erode trust in the AI/ML model’s outputs among end users and stakeholders, undermining the credibility of the entire AI initiative.

Furthermore, the additional time and resources required to rectify mistakes arising from poor-quality data can escalate operational costs substantially. In a worst-case scenario, the cost burden isn’t just financial; decisions rooted in poor-quality data can attract regulatory penalties in certain industries, resulting in legal complications and reputational damage.

Therefore, maintaining high data quality isn’t optional—it’s a crucial precondition for successful AI/ML deployment. Prioritizing data quality enhances the performance of AI/ML models, bolsters trust in their outputs, curbs unnecessary expenses, and reduces regulatory risk. Ultimately, the importance of data quality to the success of any AI/ML initiative cannot be overstated.

Key Concepts: Understanding Data & Its Role in AI/ML

- Role of Data: Recognize data as the cornerstone of AI/ML initiatives.

- Data Quality: Understand the significance of “good” data and the risks of poor-quality data.

- Data Management: Grasp the importance of proficient data collection and secure data handling.

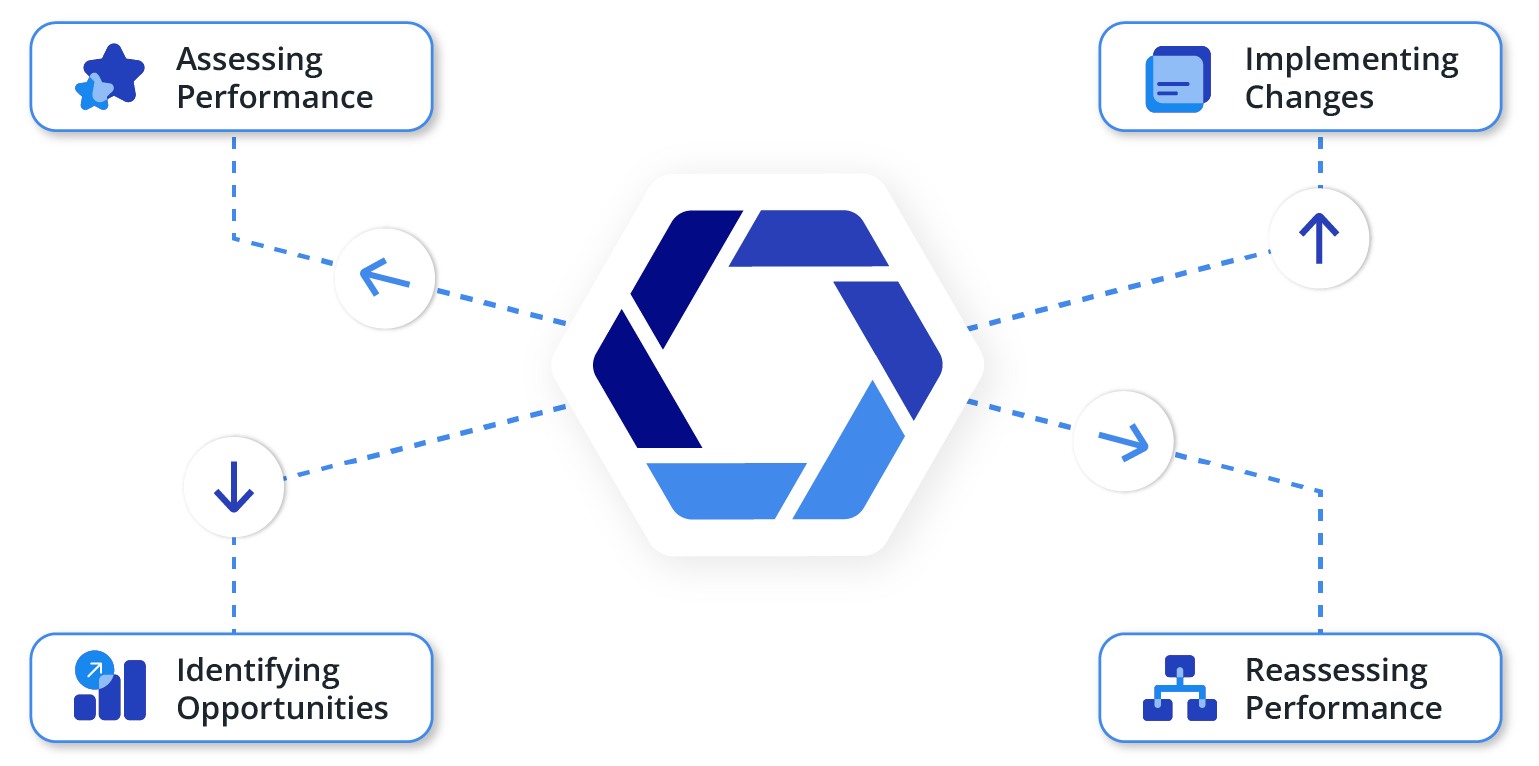

3. Identifying AI Opportunities

As your enterprise embarks on its AI journey, it is essential to identify areas where AI and ML can bring tangible benefits. From streamlining operations and improving customer experience to predicting market trends and enhancing decision-making, AI can unlock several opportunities.

However, pinpointing these opportunities requires a strategic approach and a clear understanding of your organization’s specific needs. This section delves into how to identify AI opportunities, analyze business processes, and select suitable AI projects that align with your enterprise’s goals.

Problem Identification

As with any business initiative, the first step in implementing AI and ML is identifying the problems you are trying to solve. This requires understanding your organization’s operational pain points, inefficiencies, and areas of potential improvement.

Here are some guidelines to help you identify problems suitable for AI/ML solutions:

Data-Intensive Processes

AI/ML is particularly effective at handling data-intensive tasks. If there are areas in your organization where employees are overwhelmed with data processing, AI/ML could be a solution. This could include anything from analyzing customer feedback to processing invoices.

Decision-Making Processes

AI/ML algorithms can identify patterns and make predictions based on large amounts of data. If you have processes that require making decisions based on complex data, AI/ML can assist or automate this decision-making.

Repetitive and Manual Tasks

AI/ML, especially when combined with automation technologies, can take over repetitive, manual tasks, freeing up employees’ time for more strategic work. If your organization spends a significant amount of time on manual data entry, for instance, this could be an opportunity for AI/ML.

Prediction and Forecasting

AI/ML excels at forecasting based on historical data. If there are areas in your business where prediction is critical, such as demand forecasting in supply chain management, AI/ML could be particularly beneficial.

Customer Interactions

AI/ML technologies, like chatbots and recommendation systems, can enhance customer experience. If you are looking to improve customer engagement, personalization, or service, AI/ML can offer solutions.

Remember that not all problems are suitable for AI/ML solutions. Focus on areas where artificial intelligence and machine learning can genuinely add value, considering the availability of data, cost of implementation, and potential return on investment.

Process Automation

Process automation, often facilitated by AI and ML, is crucial in improving efficiency, reducing errors, and freeing up human resources for higher-value tasks.

Tasks suitable for automation are typically repetitive, rule-based, and built on structured data. For instance, data entry, invoice processing, or regular customer communication might be prime candidates for automation.

The benefits of automating these tasks are numerous. Beyond improving operational efficiency and accuracy, automation can lead to cost savings, increased productivity, and greater capacity to scale operations.

It also allows employees to redirect their efforts toward more strategic, creative, and decision-intensive tasks, leading to higher job satisfaction and potentially better business outcomes.

However, automation is not without its challenges. These challenges range from technical hurdles, such as integrating automation solutions with existing systems, to workforce-related issues, such as training employees to work with automated processes and managing changes in job roles.

Additionally, automation can require a significant upfront investment, although the longer-term benefits usually offset this.

Furthermore, not all processes are suitable for automation, particularly those that require human judgment, creativity, or complex decision-making.

Therefore, a careful analysis is needed to identify tasks ripe for automation and to balance the potential benefits against the costs and challenges. The goal should always be to use automation to enhance human work, not to replace it completely.
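One simple way to weigh the upfront investment against the longer-term benefits is a payback-period calculation. The sketch below is a rough illustration, and the cost and savings figures are hypothetical; your finance team would substitute its own estimates.

```python
def payback_months(upfront_cost, monthly_savings, monthly_running_cost):
    """Months until cumulative net savings cover the upfront investment.

    Returns None when the automation never pays for itself.
    """
    net_monthly = monthly_savings - monthly_running_cost
    if net_monthly <= 0:
        return None
    # Ceiling division: a partial month still counts as a full month.
    return -(-upfront_cost // net_monthly)

# Hypothetical figures for an invoice-processing automation.
months = payback_months(
    upfront_cost=120_000,
    monthly_savings=15_000,
    monthly_running_cost=3_000,
)
print(months)  # 10
```

A short payback period does not settle the question on its own, but it gives you a consistent number for comparing candidate automation projects.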

Revenue Generation

As enterprises continue to delve deeper into the world of artificial intelligence and machine learning, opportunities for new revenue generation begin to emerge. AI/ML technologies, with their capacity to draw insights from data, predict outcomes, and automate processes, can be leveraged to create new products or services that could serve as significant revenue streams.

One way AI/ML can contribute to revenue generation is by creating personalized products or experiences. For instance, recommendation engines that suggest products based on a customer’s browsing history or purchase behavior can increase sales.

Similarly, AI-powered chatbots or virtual assistants can provide personalized customer service at scale, potentially improving customer satisfaction and increasing customer loyalty.

AI/ML can also unlock monetization opportunities in the data itself. Many companies sit on a wealth of untapped data that could be analyzed and packaged into insightful reports or analytics services for other businesses. These data products could be a significant source of revenue.

Additionally, the predictive capabilities of AI/ML can be instrumental in optimizing pricing strategies. Dynamic pricing models, powered by ML algorithms, can adjust prices in real time based on demand, competition, and customer behavior, helping maximize profitability.
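To give a feel for the mechanics, the sketch below uses a simple hand-written rule as a stand-in for a learned pricing model. It is not an ML algorithm; the 50% demand sensitivity, the 5% competitor margin, and the price band are all hypothetical assumptions.

```python
def adjust_price(base_price, demand_ratio, competitor_price,
                 floor_pct=0.8, ceiling_pct=1.3):
    """Rule-based stand-in for a learned pricing model.

    demand_ratio is current demand divided by typical demand
    (1.0 means normal). The result stays within a band around base_price.
    """
    # Move the price by half of the relative demand swing.
    price = base_price * (1 + 0.5 * (demand_ratio - 1))
    # Do not exceed the competitor's price by more than 5%.
    price = min(price, competitor_price * 1.05)
    # Clamp to the allowed band so prices never swing wildly.
    low, high = base_price * floor_pct, base_price * ceiling_pct
    return round(max(low, min(price, high)), 2)

print(adjust_price(100, demand_ratio=1.2, competitor_price=110))
print(adjust_price(100, demand_ratio=2.0, competitor_price=200))
```

In a production system, the hand-written rule would typically be replaced by a model trained on historical sales, while guardrails such as the floor and ceiling remain as business controls.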

However, generating revenue from AI/ML requires careful strategy and execution. It is essential to ensure that any new product or service aligns with the company’s overall business strategy and brand. It is also important to understand the legal and ethical considerations around using data, especially regarding customer privacy.

Artificial Intelligence in Decision-Making

AI and ML have a transformative impact on decision-making processes within an enterprise. They equip organizations with the capability to sift through vast amounts of data and unearth valuable insights, enabling leaders to make more informed, strategic decisions.

The decision-making prowess of AI and ML stems from their ability to identify patterns and trends within data that might elude human analysis due to sheer volume or complexity. This ability facilitates predictive analytics, where historical data is used to forecast future trends, consumer behavior, or market changes.

These predictive capabilities can be especially crucial in strategic areas such as financial planning, supply chain management, or marketing strategy, providing foresight and allowing for proactive decision-making.

AI and ML can also support decision-making through prescriptive analytics, which goes beyond prediction to recommend optimal courses of action based on the analyzed data.

For example, an AI system might suggest the best marketing channels to invest in based on past campaign performance and future predictions or recommend adjustments to production levels to meet forecasted demand while minimizing costs.
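The production example can be sketched in a few lines of Python. Here, a set of forecast demand scenarios is used to recommend the production quantity with the lowest expected cost. The scenarios, probabilities, and unit costs are invented for illustration; in practice they would come from a forecasting model and your own cost data.

```python
def expected_cost(quantity, scenarios, overstock_cost, shortage_cost):
    """Probability-weighted cost of producing `quantity` units."""
    total = 0.0
    for demand, probability in scenarios:
        surplus = max(quantity - demand, 0)    # units made but not sold
        shortfall = max(demand - quantity, 0)  # units demanded but not made
        total += probability * (surplus * overstock_cost + shortfall * shortage_cost)
    return total

def recommend_quantity(candidates, scenarios, overstock_cost, shortage_cost):
    """Pick the candidate quantity with the lowest expected cost."""
    return min(
        candidates,
        key=lambda q: expected_cost(q, scenarios, overstock_cost, shortage_cost),
    )

# Hypothetical forecast: (demand in units, probability).
scenarios = [(800, 0.25), (1000, 0.5), (1200, 0.25)]
best = recommend_quantity(
    [800, 900, 1000, 1100, 1200],
    scenarios,
    overstock_cost=2.0,  # assumed cost per unsold unit
    shortage_cost=5.0,   # assumed cost per unit of unmet demand
)
print(best)  # 1000
```

The prediction step supplies the scenarios; the prescriptive step is the comparison of options against them.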

Moreover, AI’s ability to process and analyze data in real time allows for dynamic decision-making. It enables businesses to react swiftly to changing circumstances and make decisions based on current data.

However, while AI can greatly enhance decision-making, it is vital to maintain a human element in the process. AI should serve as a tool that informs and supports human decision-makers rather than replacing them. Humans must provide context, exercise judgment, and consider ethical factors.

By incorporating AI and ML into decision-making processes, businesses can achieve greater accuracy, foresight, and agility in their strategic decisions, improving business outcomes.

Key Concepts: Identifying AI Opportunities

- Identify Areas for AI Application: Determine areas where AI/ML can bring tangible benefits to your organization.

- Understand Organizational Needs: Gain an understanding of your organization’s specific needs.

- Select Suitable AI Projects: Choose AI/ML projects that align well with your enterprise’s goals.

4. Building the Right Team

Transitioning toward AI- and ML-driven operations requires assembling a competent team that can handle the technical and strategic aspects of this transformation. This team plays a critical role in implementing AI and ML solutions and ensuring these solutions are effectively integrated into the wider business strategy.

Building the right team for AI and ML goes beyond hiring data scientists. It involves bringing together a diverse group of professionals who can collectively address the challenges this transition presents.

In this section, we’ll explore the key roles required in an AI team, the skills to look for, and the significance of fostering a culture of collaboration and continuous learning.

Key Roles

When building an AI and ML team, understanding the distinct roles and their responsibilities is paramount. Here are some key roles that typically constitute an AI/ML team:

Data Scientists

Data scientists are primarily responsible for designing and implementing models that can uncover insights from vast amounts of data.

They use statistical techniques, machine learning algorithms, and predictive models to analyze and interpret complex data sets. They also play a crucial role in communicating the insights and predictions gleaned from these models to stakeholders.

Data Engineers

Data engineers create and manage the infrastructure required to process and store large volumes of data.

They handle data ingestion, transformation, and cleaning and ensure that data is accessible, reliable, and efficiently handled for use in AI/ML projects. They work closely with data scientists to ensure that the data needs for different projects are met.

Machine Learning Engineers

Machine learning engineers are specialized software engineers with a strong understanding of ML algorithms.

They take the models built by data scientists and turn them into scalable, production-ready systems. Their tasks often involve writing code, developing APIs, and integrating models with existing software systems.

AI/ML Project Managers

AI/ML project managers oversee the entire project and ensure it aligns with the company’s strategic goals and progresses on schedule and within budget. They coordinate between different team members and stakeholders, handle resource allocation, and address any issues or roadblocks during the project.

These roles are all critical for a successful AI/ML project, and they must work closely together. Data scientists, data engineers, and machine learning engineers collaborate to design, build, and deploy models, while the project manager ensures that all these activities align with the project’s overall goals.

Building a well-rounded team with these key roles can significantly enhance the success of your AI/ML initiatives.

Hiring vs. Training

As organizations consider building their AI/ML team, one critical decision is whether to hire new talent or invest in training existing staff. Both options have their advantages and challenges.

Hiring Pros:

- Immediate expertise: Hiring new employees with AI/ML experience brings immediate expertise to accelerate your initiatives.

- Fresh perspectives: New hires often bring in fresh ideas and perspectives that can invigorate projects.

- No operational disruption: Hiring new talent means existing staff can continue their roles without disruption.

Hiring Cons:

- High costs: Hiring specialized AI/ML professionals can be expensive, given the high demand for these skills.

- Longer process: The hiring process can be time-consuming, especially when searching for highly specialized skills.

- Cultural fit: New hires need to blend in with the company’s culture, which might take time.

Training Pros:

- Leverages existing knowledge: Existing employees already understand the company, its culture, and its needs. Training them in AI/ML leverages this knowledge and may lead to more aligned and effective AI/ML initiatives.

- Boosts morale: Offering training to existing staff can boost morale and job satisfaction, showing that the company is willing to invest in their career development.

- Cost-effective: In many cases, training can be less expensive than hiring new employees, especially when considering recruitment and onboarding costs.

Training Cons:

- Takes time: Training existing employees takes time, and it may delay the launch of AI/ML projects.

- Operational disruption: Employees may need to balance training with their current roles, which could lead to short-term operational disruption.

- Skill limitations: Some employees may lack the aptitude or interest to learn complex AI/ML skills.

In reality, most organizations will likely need a combination of both strategies—hiring new talent for certain roles while providing training for existing staff to fill others. This approach ensures fresh insights and continuity while demonstrating a commitment to staff development.

External Partnerships

Even with a strong internal AI/ML team, there may be situations where it makes sense to consider external partnerships.

Consulting firms, freelancers, and outsourcing companies can provide specialized expertise, help manage resource constraints, or offer flexible staffing options. Here are some scenarios where external partnerships can be beneficial:

Specialized Expertise

AI/ML is a broad field with various specializations. If your project requires a specific skill set that your internal team lacks, such as expertise in a certain type of ML algorithm or experience with a specific industry application, bringing in a consultant or freelancer with that specific knowledge can be advantageous.

Resource Management

If your internal team is stretched thin or you have a large project that requires additional manpower, partnering with an external firm can help manage the workload. Outsourcing companies can provide a scalable workforce that can ramp up or down as needed, allowing for greater flexibility in managing resources.

Project-Based Work

For one-off projects or short-term needs, hiring a freelancer or consulting firm can be more cost-effective than hiring a full-time employee. Once the project is complete, the engagement with the external partner can end without layoffs or restructuring.

Risk Mitigation

Engaging an experienced consulting firm or freelancer can also help mitigate risk, especially for complex or high-stakes projects. These external experts can help avoid common pitfalls, ensure best practices are followed, and provide objective advice and oversight.

However, when considering external partnerships, it is also important to account for potential challenges such as communication difficulties, confidentiality concerns, and reduced control over the work process. Establish clear expectations, protect sensitive data, and maintain effective communication and oversight throughout the engagement.

Organizational Structure

The structure of your data and AI team can significantly impact the success of your AI and ML initiatives. Two common structures are centralized and decentralized teams, each with its unique advantages and challenges.

Centralized Teams

In a centralized structure, all data and AI professionals are grouped in a single team or department, usually under a chief data officer or a similar role. This team serves the entire organization and works on projects across different departments.

Pros

- Cohesion: Centralized teams can foster better coordination and consistency in AI/ML strategies and standards across the organization.

- Efficient use of resources: Centralizing can prevent duplication of effort and ensure the optimal utilization of resources.

- Skill development: Centralized teams can provide better opportunities for team members to learn from each other and develop their skills.

Cons

- Potential bottlenecks: Centralized teams may become overloaded with requests from different departments, leading to potential bottlenecks and delays.

- Misalignment with business needs: Being detached from specific departments may lead to a disconnect between the AI/ML team and the unique needs of different parts of the organization.

Decentralized Teams

In a decentralized structure, data and AI professionals are embedded within various departments or business units. They work closely with the specific department they are a part of and focus on its unique needs.

Pros

- Alignment with business needs: Being embedded within departments ensures that the AI/ML initiatives align closely with the specific needs and goals of each department.

- Faster decision-making: Decentralized teams can make decisions and implement changes quickly without navigating through a centralized hierarchy.

Cons

- Potential for inconsistency: Without central oversight, different teams might adopt different approaches, leading to inconsistencies in AI/ML strategies and standards across the organization.

- Resource constraints: Smaller, decentralized teams may not have the same access to resources as a larger, centralized team.

Ultimately, the choice between a centralized and decentralized structure depends on various factors, such as the size of your organization, the nature of your projects, and your overall business strategy.

It is also possible to adopt a hybrid approach, with a centralized team setting the overall strategy and standards while decentralized teams focus on department-specific initiatives. This combines the strengths of both models, providing consistency across the organization and alignment with each department’s needs.

Key Concepts: Building the Right Team

- Skilled Team: Form a group competent in both technical and strategic facets of AI/ML.

- Role Diversity: Ensure a blend of professionals from various fields, not just data scientists.

- Collaborative Learning: Promote a culture that encourages teamwork and continuous learning.

5. Choosing the Right Tools

Embarking on your AI/ML journey necessitates selecting appropriate tools to handle your data and effectively operationalize your AI/ML models. These tools form the backbone of your AI infrastructure and are crucial to the successful execution of your AI/ML initiatives.

This section delves into the essential tools for various stages of your AI/ML pipeline, including data collection and storage, data analysis and preprocessing, model development, deployment, and monitoring.

The goal is to guide you in identifying the tools that best align with your specific needs and goals while offering scalability, security, and ease of use. From open-source libraries to comprehensive AI platforms, we’ll explore a range of options to help you make an informed choice.

Hardware and Software

Artificial intelligence/machine learning workloads often demand significant computational resources. Developing and running ML models, especially those involving large datasets or complex computations, requires robust hardware and the right software tools.

Hardware

The hardware serves as the physical foundation of your AI/ML infrastructure.

CPUs

Central processing units (CPUs) are the standard processing units in most computers. They are versatile and can handle a wide range of tasks. For smaller datasets and less computationally intensive algorithms, CPUs can be sufficient.

GPUs

Graphics processing units (GPUs), traditionally used for rendering video and graphics, have proven extremely effective for the parallelized computing needs of machine learning, particularly for tasks like training deep learning models.

TPUs

Tensor processing units (TPUs) are Google’s custom-developed application-specific integrated circuits (ASICs) used to accelerate machine learning workloads. They were originally designed around TensorFlow, Google’s own ML framework, now also support frameworks such as JAX and PyTorch, and are available through Google Cloud.

Cloud vs. On-Premises

Depending on your organization’s scale and needs, you may opt for on-premises hardware or leverage cloud-based solutions. Cloud platforms like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP) offer robust, scalable, and flexible computing resources for AI/ML workloads.

Software

Software tools, platforms, and libraries form the working environment for your AI/ML projects.

Programming Languages

Python is the most popular language for AI/ML because of its simplicity and the availability of many scientific and ML libraries (like NumPy, Pandas, and Scikit-learn). Other languages like R, Java, and Julia are also used in certain contexts.

Frameworks and Libraries

Libraries like TensorFlow, PyTorch, and Keras simplify developing and training ML models. They offer pre-defined functions, algorithms, and models that can be used to design, train, and validate models.

Data Processing and Visualization Tools

Software tools like Apache Spark and Hadoop can process large datasets efficiently. Visualization tools like Matplotlib, Seaborn, and Tableau help you understand the data and present the results.

Model Deployment and Monitoring Tools

Once models are developed, they need to be deployed in production environments. Tools like Docker, Kubernetes, and TensorFlow Serving can help in deployment and scaling. Tools like TensorBoard, MLflow, and Prometheus are commonly used for monitoring model performance.

Choosing the right hardware and software tools is key to setting up a successful AI/ML environment. The choices will depend on several factors, including your specific use cases, the scale of your operations, the expertise of your team, and your budget.

On-Premises vs. Cloud: Pros and Cons

In the context of artificial intelligence and machine learning, deciding between on-premises and cloud infrastructure is a strategic decision that can significantly impact the efficiency, cost, and scalability of your projects. Each option has its own set of advantages and potential challenges.

On-Premises Infrastructure

On-premises infrastructure involves hosting and maintaining your hardware and software resources in your own facilities.

Pros

- Control: You have complete control over your infrastructure, data, and security protocols.

- Customizability: You can tailor the hardware and software configuration to meet your exact needs.

- Data Security: You maintain complete custody of your data, reducing potential external risks.

Cons

- Upfront Costs: High initial investment is required for purchasing and setting up hardware and software.

- Maintenance: Ongoing responsibility for maintaining and updating hardware and software can be resource-intensive.

- Scalability: Scaling up involves additional procurement and setup, making rapid scalability challenging.

Cloud Infrastructure

Cloud infrastructure involves using the hardware and software resources provided by a cloud service provider. These resources are accessed over the internet and are scalable on demand.

Pros

- Scalability: Cloud resources can be easily scaled up or down based on demand, providing flexibility and efficiency.

- Cost-Effective: The pay-as-you-go model reduces upfront costs, and you only pay for what you use.

- Focus on Core Activities: Offloading infrastructure maintenance to the provider frees your team to concentrate on core business activities.

Cons

- Potential Security Concerns: Data stored in the cloud can be vulnerable to breaches, requiring robust security measures.

- Dependence on Service Provider: There may be limitations depending on the offerings of your service provider. Also, network connectivity issues can disrupt access to cloud resources.

- Data Governance: Depending on the jurisdiction, storing data in the cloud can have implications for regulatory compliance and data sovereignty.

The choice between on-premises and cloud infrastructure depends on various factors, including your organization’s specific needs, budget, scale, and data security requirements. A hybrid model, combining on-premises and cloud solutions, can also be an effective approach, providing a balance between control and flexibility.

AI Platforms and Tools: Overview of Popular Options

The AI landscape offers a wide range of platforms and tools that cater to different stages of the AI/ML lifecycle, from data preprocessing to model development, training, deployment, and monitoring. These platforms and tools can greatly simplify and accelerate your AI/ML initiatives.

Here are some popular options:

Google Cloud AI Platform

This integrated platform, since succeeded by Google’s Vertex AI, provides a suite of tools for building, deploying, and managing ML models.

It provides seamless integration with TensorFlow, support for other major frameworks, and robust data-handling capabilities. It also offers services like AutoML for automating model building and TPUs for faster computations.

Amazon SageMaker

Amazon SageMaker is a fully managed service that enables developers and data scientists to quickly build, train, and deploy ML models.

It includes tools for every step of the ML process and integrates well with other AWS services. SageMaker offers capabilities such as built-in algorithms, support for diverse ML frameworks, and options for distributed model training.

Microsoft Azure Machine Learning

Azure ML is a cloud-based platform for building, training, and deploying ML models.

It provides an interactive workspace to write, run, and test code and manage resources. It offers automated ML capabilities, support for open-source tools, and scalability across Azure’s global infrastructure.

IBM Watson Studio

Watson Studio provides tools that let data scientists, application developers, and subject matter experts work with data collaboratively and use it to build, train, and deploy models at scale.

It supports a range of open-source tools and frameworks and integrates with IBM’s AI-powered products.

Open-Source Libraries

Beyond comprehensive platforms, open-source libraries such as TensorFlow, PyTorch, Scikit-learn, Keras, and Pandas offer powerful tools for data analysis, model development, and machine learning.

They have active communities, abundant resources, and the flexibility to be used in diverse environments.

Data Preprocessing and Visualization Tools

Libraries like Pandas, NumPy, and Matplotlib in Python simplify data preprocessing and visualization.

Apache Spark and Hadoop help handle big data, while Tableau and Power BI offer robust data visualization capabilities.

Model Deployment and Monitoring Tools

Tools like Kubernetes and Docker assist in deploying and managing models in production, while MLflow and TensorBoard help monitor model performance and visualize results.

Vendor Evaluation: Criteria for Selecting Vendors, Software, and Service Providers

Choosing the right vendors, software, and service providers for your AI and ML initiatives is a critical aspect of your journey. These choices can significantly impact your project’s speed, quality, cost, and long-term sustainability. Here are some key criteria to consider during the evaluation process:

Relevant Expertise

Evaluate whether the vendor has a proven track record in delivering solutions in your industry or solving similar problems. Look for case studies, customer testimonials, and independent reviews demonstrating their capabilities.

Technology Compatibility

Assess whether the vendor’s technology stack is compatible with your existing infrastructure and workflows. This includes considering programming languages, integration capabilities, and support for your preferred ML frameworks or methodologies.

Scalability

As your AI/ML initiatives grow, you’ll need solutions that can scale with your needs. Ensure the vendor’s solutions can handle increasing data volumes, complex models, and growing user demands.

Security and Compliance

Review the vendor’s security protocols and compliance certifications. If you’re in a heavily regulated industry, confirm that the vendor can meet industry-specific compliance requirements.

Cost and Value

Consider the total cost of ownership, including upfront costs, subscription fees, implementation costs, and any costs related to maintenance, support, or upgrades. Assess the value provided in terms of increased efficiency, improved decision-making, or revenue growth.

Ease of Use and Adoption

User-friendly software and platforms can accelerate adoption among your team members. Consider factors like user interface, learning curve, and availability of training resources.

Support and Service

Good vendor support can significantly impact your AI journey. Look for providers that offer strong technical support, helpful documentation, and consulting services to guide you in implementation and problem-solving.

Innovation and Future-Readiness

The field of AI/ML is constantly evolving. Choose a vendor that demonstrates a commitment to innovation, regularly updates its offerings, and is likely to keep pace with future trends and technologies.

The right vendors and service providers should align with your needs, goals, and constraints. Comprehensive evaluation and thoughtful selection can save time, reduce costs, and improve the outcomes of your AI/ML initiatives.
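A common way to apply criteria like these consistently is a weighted scoring matrix. The sketch below is one possible approach, not a prescribed method: the weights, the 1-to-5 scores, and the vendor names are hypothetical, and you would set the weights to reflect your own priorities.

```python
# Hypothetical weights for the eight criteria above; they should sum to 1.0.
weights = {
    "expertise": 0.20, "compatibility": 0.15, "scalability": 0.15,
    "security": 0.20, "cost_value": 0.15, "ease_of_use": 0.05,
    "support": 0.05, "innovation": 0.05,
}

def weighted_score(scores, weights):
    """Combine 1-5 criterion scores into a single weighted score."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)

# Hypothetical evaluation results from your review panel.
vendors = {
    "Vendor A": {"expertise": 5, "compatibility": 4, "scalability": 3,
                 "security": 4, "cost_value": 3, "ease_of_use": 4,
                 "support": 3, "innovation": 4},
    "Vendor B": {"expertise": 3, "compatibility": 3, "scalability": 5,
                 "security": 3, "cost_value": 5, "ease_of_use": 4,
                 "support": 4, "innovation": 3},
}

ranking = sorted(vendors, key=lambda v: weighted_score(vendors[v], weights),
                 reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(vendors[name], weights):.2f}")
```

The score is a starting point for discussion rather than a verdict; a vendor that fails a hard requirement such as regulatory compliance should be excluded regardless of its total.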

Key Concepts: Choosing the Right Tools

- AI/ML Pipeline: Learn the tools required for each stage.

- Tool Selection: Choose tools that align with your needs while ensuring scalability and security.

- Option Exploration: Consider a wide range of tool options, from open-source to comprehensive platforms.

6. Understanding Costs and Developing a Budget

Building a realistic budget is a cornerstone of successful artificial intelligence/machine learning project management. However, the costs associated with AI/ML initiatives are not always immediately apparent.

This section is dedicated to helping you understand the various costs involved, how they may apply to your organization, and how to develop a comprehensive budget that covers all potential expenditures.

From direct costs such as hardware and software purchases to indirect costs like personnel training and data management, we’ll delve into the financial considerations you need to keep in mind on your AI journey.

Cost Breakdown

Understanding the key components of your AI/ML initiative’s cost structure is crucial for building a realistic budget and ensuring long-term financial sustainability. The three major areas where you can expect to incur costs are data storage, computational resources, and human resources:

Data Storage Costs

The vast volumes of data used in AI/ML projects must be stored securely and efficiently. Storage costs will depend on several factors:

Volume of Data

As the volume of data increases, so does the storage cost. Consider the size of your current data and the potential for future data growth.

On-Premises vs. Cloud

On-premises storage incurs upfront capital expenses for hardware and installation, plus ongoing costs for maintenance and upgrades. Cloud storage, by contrast, is typically billed monthly based on usage and is treated as an operating expense.
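A quick break-even calculation can make this trade-off concrete. The sketch below compares cumulative costs month by month under simplifying assumptions: a constant data volume, a flat cloud price per terabyte, and fixed maintenance costs. All figures are hypothetical and are not real vendor prices.

```python
def cumulative_cost_on_prem(months, upfront, monthly_maintenance):
    """Upfront hardware spend plus maintenance to date."""
    return upfront + monthly_maintenance * months

def cumulative_cost_cloud(months, tb_stored, price_per_tb_month):
    """Usage-based cloud storage fees to date (constant volume assumed)."""
    return tb_stored * price_per_tb_month * months

def break_even_month(upfront, monthly_maintenance, tb_stored,
                     price_per_tb_month, horizon=120):
    """First month in which on-premises becomes cheaper overall, or None."""
    for month in range(1, horizon + 1):
        on_prem = cumulative_cost_on_prem(month, upfront, monthly_maintenance)
        cloud = cumulative_cost_cloud(month, tb_stored, price_per_tb_month)
        if on_prem < cloud:
            return month
    return None

# Hypothetical inputs: $60,000 hardware, $500/month upkeep, 100 TB at $25/TB.
print(break_even_month(60_000, 500, 100, 25))  # 31
```

If the result is None, cloud storage stays cheaper over the whole planning horizon at the volumes you entered.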

Data Management

This includes costs associated with data organization, indexing, and retrieval, as well as data backup and recovery systems.

Computational Resources Costs

AI/ML models, especially deep learning models, require substantial computational power for training and inference.

Hardware

Depending on the complexity of your models, you’ll need CPUs and potentially GPUs or TPUs. The cost can vary significantly based on the type and number of processors needed.

Cloud Compute Services

If you’re using cloud-based compute resources, costs will be based on the amount of compute time used, the type of machine instances selected, and whether you’re using spot instances or reserved instances.

Human Resources Costs

People are often the most significant investment in AI/ML projects.

Hiring

This includes the costs of recruiting, onboarding, and compensating new staff, such as data scientists, machine learning engineers, data engineers, and project managers.

Training

If you choose to upskill your current staff, you’ll need to budget for training programs, courses, workshops, and other educational resources.

Retention

Retaining talent in the competitive AI/ML field can require investments in competitive salaries, benefits, and professional development opportunities.

Understanding these costs is the first step in developing a comprehensive budget for your AI/ML initiatives. You must plan for these expenses and monitor them closely to ensure your project remains on track financially.
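
To make the breakdown above concrete, the three cost areas can be tallied in a few lines of Python. This is a minimal sketch, not a prescribed method: the `CostItem` structure, the line items, and every figure are hypothetical placeholders to replace with your own estimates.

```python
from dataclasses import dataclass


@dataclass
class CostItem:
    category: str       # "data storage", "compute", or "people"
    name: str
    annual_cost: float  # hypothetical figure, in your budget currency


def total_by_category(items):
    """Sum annual costs per category."""
    totals = {}
    for item in items:
        totals[item.category] = totals.get(item.category, 0.0) + item.annual_cost
    return totals


# Illustrative numbers only; none of these come from real projects.
items = [
    CostItem("data storage", "cloud storage", 24_000),
    CostItem("data storage", "backup and recovery", 6_000),
    CostItem("compute", "cloud GPU instances", 60_000),
    CostItem("people", "hiring", 300_000),
    CostItem("people", "training", 20_000),
    CostItem("people", "retention", 15_000),
]

totals = total_by_category(items)
grand_total = sum(totals.values())
for category, cost in totals.items():
    print(f"{category}: {cost:,.0f} ({cost / grand_total:.0%} of total)")
print(f"total: {grand_total:,.0f}")
```

Seeing each category as a share of the total makes it easier to spot where an estimate deserves a second look.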

Budgeting: Tips and Guidance for Developing a Budget for AI/ML Projects

Creating a comprehensive and realistic budget for AI/ML projects is crucial for ensuring the project’s financial feasibility and long-term success. Here are some tips and guidelines to consider while developing your budget:

Include All Categories of Expenses

Make sure to account for all possible costs, including data storage, computational resources, human resources, and potential miscellaneous costs like software licenses, consulting services, and training.

Estimate Costs Conservatively

It is better to overestimate costs than to underestimate them. AI/ML projects often have unforeseen challenges that can lead to additional expenses.

Plan for Scalability

Consider the costs of scaling up your AI/ML initiatives in the future. As your data grows and your models become more complex, both storage and computational costs could increase significantly.

Factor in Maintenance and Upgrades

AI/ML isn’t a one-time investment. There will be ongoing costs for system maintenance, software upgrades, and model retraining to keep your systems current and effective.

Set Aside a Contingency Fund

A portion of your budget should be set aside to cover unexpected costs. A commonly recommended amount is between 10% and 20% of the total budget.

Consider Return on Investment (ROI)

While it is important to understand costs, remember to consider the potential returns. Improved efficiency, reduced errors, new revenue streams, and better decision-making can all provide substantial ROI.

Review and Adjust Regularly

Budgets are not set in stone. Regularly review and adjust your budget based on actual expenses and changing project needs.

Budgeting for AI/ML projects involves careful planning, conservative estimating, and regular review. It is vital to your project’s success and can help ensure you have the financial resources to carry your initiative from conception to completion.
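
As a rough illustration of the contingency tip above, the sketch below adds a reserve to a base budget at both ends of the 10% to 20% range. The function name `budget_with_contingency` and all cost figures are assumptions made for this example.

```python
def budget_with_contingency(base_costs, contingency_rate):
    """Return (base, contingency, total) for a dict of estimated costs."""
    if not 0 <= contingency_rate <= 1:
        raise ValueError("contingency_rate must be a fraction between 0 and 1")
    base = sum(base_costs.values())
    contingency = base * contingency_rate
    return base, contingency, base + contingency


# Hypothetical annual estimates, one entry per expense category.
base_costs = {
    "data storage": 30_000,
    "computational resources": 60_000,
    "human resources": 335_000,
    "software licenses and consulting": 25_000,
}

# Compare the low and high ends of the commonly recommended range.
for rate in (0.10, 0.20):
    base, contingency, total = budget_with_contingency(base_costs, rate)
    print(f"{rate:.0%} contingency: base {base:,.0f} "
          f"+ reserve {contingency:,.0f} = {total:,.0f}")
```

Running both ends of the range shows how much the reserve alone moves the total, which can help when deciding how conservative to be.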

ROI Estimation: How to Estimate and Maximize Return on Investment

Estimating the ROI for AI/ML projects can be complex due to the wide-ranging potential benefits and the often-indirect impact on revenue and cost savings. Despite this complexity, understanding and tracking ROI is critical to making informed decisions and justifying your investment in AI/ML.

Here are some steps to estimate and maximize ROI:

Define Clear Objectives

Start by defining clear, measurable objectives for your AI/ML projects. These could be increased efficiency, reduced errors, cost savings, increased revenue, or improved customer satisfaction. A clear understanding of your goals will enable you to measure your success more accurately.

Identify Key Performance Indicators (KPIs)

Determine the KPIs that align with your objectives. KPIs include metrics such as processing time, error rates, sales volume, or customer churn rate. You will use these KPIs to measure the impact of your AI/ML initiatives.

Establish a Baseline

To understand the value your AI/ML project is creating, you need to compare its performance to a baseline. This baseline should reflect your organization’s performance before implementing the AI/ML project.

Estimate Direct Benefits

Calculate the direct financial benefits of your AI/ML projects. These could include cost savings from process automation, increased sales from improved customer targeting, or additional revenue from new AI-powered services.

Consider Indirect Benefits

AI/ML projects can also deliver significant indirect benefits, such as improved decision-making, increased customer satisfaction, and enhanced reputation. While these may be harder to quantify, they should be considered part of your ROI estimation.

Calculate Costs

Add up all costs associated with your AI/ML projects, including data storage, computational resources, human resources, and other relevant expenses.

Calculate ROI

The basic formula for ROI is (Net Profit / Total Costs) * 100, where Net Profit is the Direct Benefits minus the Total Costs calculated in the previous step. The formula captures only direct financial returns, so make sure to also consider the value of the Indirect Benefits in your overall assessment.

Maximize ROI

After your initial implementation, look for ways to maximize ROI by scaling successful models, optimizing resources, and continuously refining your AI/ML strategies based on performance data.

While estimating ROI for artificial intelligence/machine learning projects can be challenging because of the range of direct and indirect benefits, it is a critical part of evaluating the success of your AI/ML initiatives. With careful planning, tracking, and optimization, you can maximize your ROI and make a compelling case for your investment in AI/ML.
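
The ROI formula and the baseline comparison described in the steps above can be sketched as follows. The function names `roi_percent` and `kpi_change_percent` and the sample figures are hypothetical. Indirect benefits are deliberately left out because they are hard to express in currency.

```python
def roi_percent(direct_benefits, total_costs):
    """ROI as a percentage: (Net Profit / Cost) * 100."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    net_profit = direct_benefits - total_costs
    return net_profit / total_costs * 100


def kpi_change_percent(baseline, current):
    """Percentage change of a KPI relative to its pre-project baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


# Hypothetical figures: 600,000 in direct benefits against 500,000 in costs,
# and average processing time falling from 40 to 30 minutes.
roi = roi_percent(direct_benefits=600_000, total_costs=500_000)
change = kpi_change_percent(baseline=40, current=30)
print(f"ROI: {roi:.1f}%")
print(f"Processing time change vs. baseline: {change:+.1f}%")
```

A negative result from `roi_percent` means that costs exceeded direct benefits for the period measured. This is common early in a project and is one reason to track the figure over time.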

Key Concepts: Understanding Costs and Developing a Budget

- Cost Identification: Recognize all direct and indirect AI/ML costs.

- Cost Assessment: Evaluate each cost’s potential impact on your organization.

- Budget Plan: Construct a comprehensive budget covering all potential expenditures.

7. Developing a Roadmap

Building an AI/ML-capable organization isn’t a task that can be achieved overnight. It requires a strategic plan, a defined pathway that lays out each step toward achieving AI and ML capabilities.

This section is dedicated to guiding you through the process of developing a well-structured roadmap for your artificial intelligence/machine learning journey. We’ll delve into setting realistic timelines, understanding dependencies, considering potential risks, and sequencing your steps effectively.

With a clearly defined roadmap, you can navigate the complexities of AI/ML implementation with confidence, ensuring that each part of your organization is synchronized in working toward your defined AI/ML goals.

Goal Setting: Setting Realistic and Achievable AI/ML Goals

Before embarking on your AI/ML journey, you must have a clear understanding of what you want to achieve. Setting realistic and achievable goals provides direction for your project, motivates your team, and serves as a basis for measuring progress and success.

Here’s how to set realistic and achievable AI/ML goals:

Identify Your Business Needs

Your AI/ML goals should align with your overall business objectives and address specific business needs. Start by identifying the problems or challenges in your business that AI/ML can potentially solve.

Use the S.M.A.R.T. Framework

Apply the S.M.A.R.T. (Specific, Measurable, Achievable, Relevant, Time-Bound) framework to your goal setting. For instance, rather than saying, “We want to improve customer service,” a S.M.A.R.T. goal might be “We want to reduce customer service response times by 25% using AI-powered chatbots within the next 12 months.”

Be Realistic

While AI/ML has a vast range of applications, it is essential to keep expectations realistic. Not every problem can be solved with AI/ML, and achieving results can take time. Set goals that challenge your organization but are still within reach.

Consider Available Resources

Your goals should reflect the resources (data, talent, budget, etc.) available to your organization. Overambitious goals could lead to resource strain and project failure.

Set Short-Term and Long-Term Goals

Short-term goals can help keep your team motivated and provide early indications of progress, while long-term goals provide the broader vision guiding your AI/ML efforts.

Iterate and Refine

Remember, goal setting is not a one-time task. As your project progresses and your organization’s capacity to leverage AI/ML evolves, you’ll need to iterate and refine your goals.

Goal setting is a critical first step in developing your AI/ML roadmap. By identifying your business needs, applying the S.M.A.R.T. framework, being realistic, considering your resources, and setting both short-term and long-term goals, you can chart a clear and achievable path toward AI/ML success.

Milestones and Timelines: Planning Key Steps and Creating a Project Timeline

Creating an effective timeline for AI/ML initiatives involves planning key steps, setting milestones, and ensuring all parts of the project are synchronized.

Here’s how to plan your AI/ML project milestones and timelines:

Outline Key Steps

Begin by outlining the key steps in your AI/ML project. These may include defining goals, assembling a team, data collection and preprocessing, model development and training, testing and validation, deployment, and evaluation.

Set Milestones

Milestones are significant events or achievements that mark the completion of major project phases. These can be useful for tracking progress, maintaining momentum, and providing a sense of achievement for the team.

Examples include finalizing your AI/ML project team, completing the data collection phase, or deploying the first model.

Establish Timelines

For each step and milestone, set a timeline. Consider potential delays and challenges, and be realistic about how long each phase will take. Certain steps in AI/ML projects, such as data collection and preprocessing or model training, can be particularly time-consuming.

Sequence Tasks Effectively

Some tasks will depend on others, so it is important to sequence them effectively. For example, model development cannot begin until data collection and preprocessing are complete. Use a project management tool to visualize your project timeline and dependencies.

Allow for Iteration

AI/ML projects often involve iteration, where models are tested, refined, and retested based on results. Make sure to include time for these cycles in your project timeline.

Regular Reviews

Schedule regular review points at which you can assess progress, adjust timelines if necessary, and celebrate successes. This will help keep the project on track and maintain team morale.
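
To illustrate how task sequencing and dependencies shape a timeline, the sketch below orders the key steps so that every task follows its prerequisites, then computes the earliest week each can finish. The durations and the exact dependency links are hypothetical. It uses `graphlib.TopologicalSorter` from Python's standard library (Python 3.9 or later). A project management tool does the same work behind the scenes.

```python
from graphlib import TopologicalSorter

# task -> (duration in weeks, tasks that must finish first).
# Durations and dependency links are illustrative assumptions.
tasks = {
    "define goals": (2, []),
    "assemble team": (4, ["define goals"]),
    "collect and preprocess data": (8, ["define goals"]),
    "develop and train model": (6, ["assemble team", "collect and preprocess data"]),
    "test and validate": (3, ["develop and train model"]),
    "deploy": (2, ["test and validate"]),
    "evaluate": (2, ["deploy"]),
}


def schedule(tasks):
    """Return a valid task order and the earliest finish week of each task."""
    graph = {name: deps for name, (_, deps) in tasks.items()}
    order = list(TopologicalSorter(graph).static_order())
    finish = {}
    for name in order:
        duration, deps = tasks[name]
        # A task starts once its slowest prerequisite has finished.
        start = max((finish[dep] for dep in deps), default=0)
        finish[name] = start + duration
    return order, finish


order, finish = schedule(tasks)
for name in order:
    print(f"week {finish[name]:>2}: {name} complete")
```

In this sketch, model development waits for data collection rather than for team assembly, because data collection finishes later. Adding buffer weeks to a task's duration is one simple way to account for iteration cycles and delays.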

Risk Management: Identifying Potential Risks and Developing Mitigation Strategies

In AI/ML projects, as with any technological initiative, various risks can arise that may impact the project’s success. Effective risk management involves anticipating these risks, evaluating their potential impact, and developing strategies to mitigate them.

Here’s how you can approach risk management in your AI/ML projects:

Identify Potential Risks

The first step in risk management is to identify potential risks.

In AI/ML projects, these could range from technical challenges, such as data quality issues, model overfitting, and algorithm bias, to operational and strategic risks, such as budget overruns, project delays, and misalignment with business objectives. External risks, such as regulatory changes or market shifts, should also be considered.

Evaluate Risk Impact

After identifying potential risks, evaluate their possible impact on your project. This can be done by considering both the likelihood of the risk occurring and the severity of its potential impact. This evaluation can help prioritize risks.

Develop Mitigation Strategies

For each risk, develop strategies to either prevent the risk from occurring or reduce its impact if it occurs.

For instance, to mitigate data quality issues, you might implement stringent data cleaning and preprocessing steps. For project delays, you might allocate buffer time in your project schedule.

Assign Risk Ownership

Assign each risk to a team member or a group within the organization who will be responsible for monitoring the risk and implementing mitigation strategies.

Regularly Review and Update Risk Management Plan

Risk management is not a one-time activity but an ongoing process. Regularly review your risk management plan, update it with new risks, and adjust mitigation strategies as necessary.

Prepare for Contingencies

Despite your best efforts, some risks may still materialize. Therefore, it is essential to have contingency plans in place to manage these situations.
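
The evaluation and ownership steps above can be captured in a simple risk register. The sketch below assumes a common convention that the text does not prescribe: likelihood and severity are each rated on a 1-to-5 scale and multiplied to give a priority score. The ratings, owners, and some of the mitigations shown are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); assumed scale
    severity: int    # 1 (minor) to 5 (critical); assumed scale
    owner: str       # hypothetical role responsible for monitoring
    mitigation: str

    @property
    def score(self):
        # Assumed convention: priority score = likelihood x severity.
        return self.likelihood * self.severity


register = [
    Risk("data quality issues", 4, 4, "data engineering lead",
         "stringent data cleaning and preprocessing"),
    Risk("project delays", 3, 3, "project manager",
         "buffer time in the project schedule"),
    Risk("regulatory changes", 2, 5, "compliance officer",
         "periodic regulatory review"),
    Risk("budget overruns", 3, 4, "finance partner",
         "contingency fund"),
]

# Highest score first, so the most pressing risks get attention first.
prioritized = sorted(register, key=lambda risk: risk.score, reverse=True)
for risk in prioritized:
    print(f"{risk.score:>2}  {risk.name} (owner: {risk.owner}): {risk.mitigation}")
```

Re-scoring the register at each regular review keeps the priorities current as new risks appear and old ones recede.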